Generative AI Tutorial – Slide 17

Explanation of the concept shown in Slide 17, including real examples, applications, and a technical breakdown.

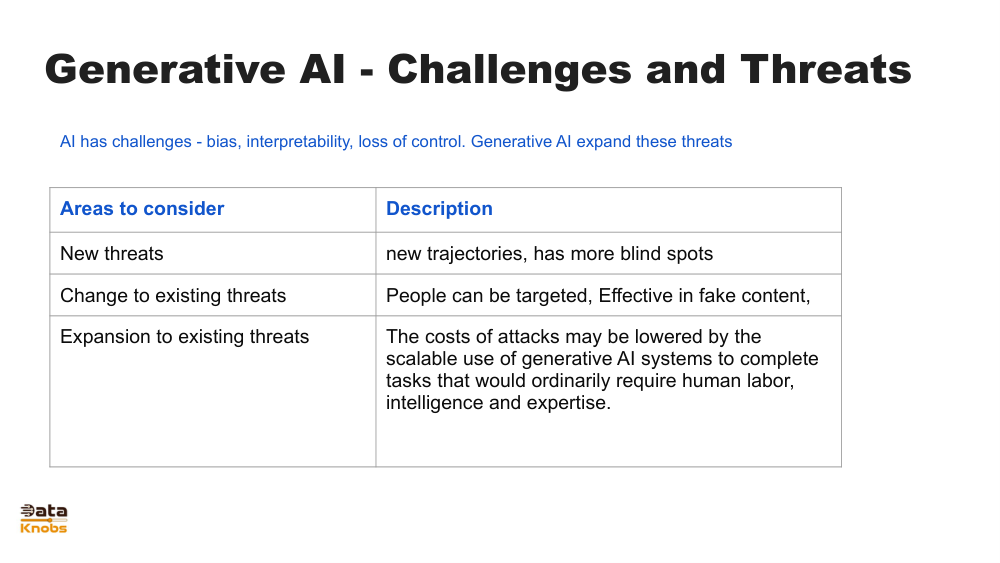

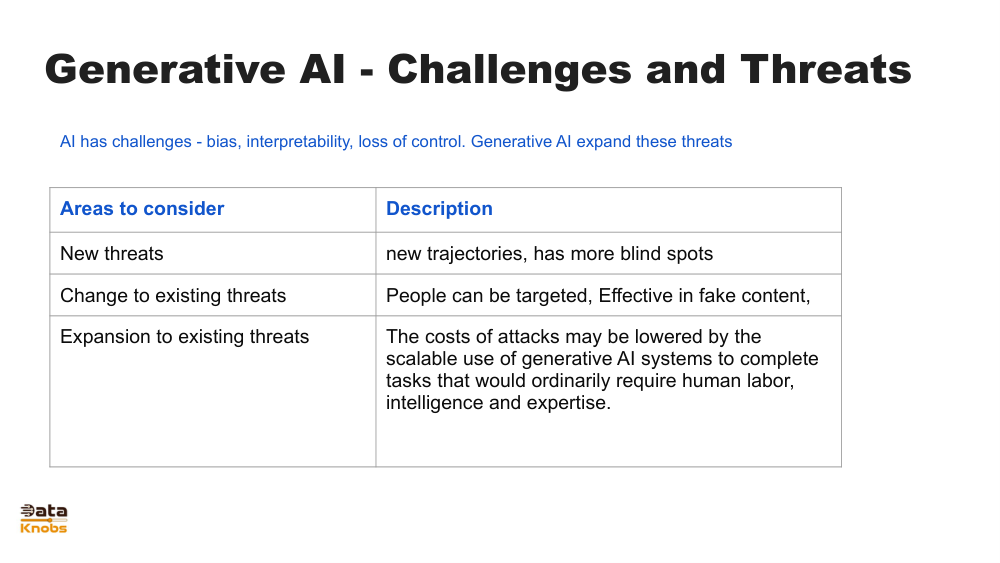

Slide 17 explains how Generative AI models create new outputs by learning patterns from existing data. It traces the path from raw data to high‑level abstract representations, which the model then decodes into meaningful outputs such as text, images, or audio.

The model transforms input data into dense vector representations capturing essential meaning and structure.

The model identifies relationships and patterns between tokens, pixels, or signals across vast datasets.

The internal representation is decoded step‑by‑step to produce new text, images, or other output formats.
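The two ideas above, learning patterns between tokens and decoding step by step, can be sketched with a bigram model, which is far simpler than the neural networks the slide describes. This is a minimal illustration, not how production models work: the corpus is made up, and `train_bigrams` and `generate` are hypothetical helper names.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up corpus, used only for illustration.
CORPUS = "the cat sat on the mat . the dog sat on the rug ."

def train_bigrams(text):
    """Count how often each token follows another (the 'learned patterns')."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def generate(counts, start, max_tokens=8, seed=0):
    """Decode step by step: sample each next token given the previous one."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(max_tokens - 1):
        followers = counts.get(output[-1])
        if not followers:  # no known continuation, stop early
            break
        candidates, weights = zip(*followers.items())
        output.append(rng.choices(candidates, weights=weights, k=1)[0])
    return output

counts = train_bigrams(CORPUS)
sample = generate(counts, start="the")
print(" ".join(sample))
```

Every generated pair of adjacent tokens was seen in the corpus, yet the full sequence can be new. A neural model replaces the count table with learned dense representations, but the loop of predicting one piece at a time has the same shape.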

Text, images, or signals are fed into the model.

Data is converted into vector embeddings.

Stacked neural network layers refine the meaning captured in those embeddings.

The model generates a new coherent result.
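The four steps above can be traced in a toy forward pass. This is a sketch under strong assumptions: the vocabulary, the embedding width, and the two-layer design are arbitrary choices made here, and the weights are random rather than trained, so the predicted token carries no meaning. Only the flow of data is the point.

```python
import math
import random

VOCAB = ["the", "cat", "sat", "on", "mat"]  # hypothetical toy vocabulary
DIM = 4                                     # embedding width, chosen arbitrarily

rng = random.Random(42)

def random_matrix(rows, cols):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

# Randomly initialised parameters; a real model would learn these from data.
EMBEDDINGS = random_matrix(len(VOCAB), DIM)           # one vector per token
HIDDEN = [random_matrix(DIM, DIM) for _ in range(2)]  # two refinement layers
OUTPUT = random_matrix(DIM, len(VOCAB))               # maps back to vocabulary scores

def matvec(vec, matrix):
    """Multiply a row vector by a matrix."""
    cols = len(matrix[0])
    return [sum(v * row[j] for v, row in zip(vec, matrix)) for j in range(cols)]

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_distribution(tokens):
    # Steps 1-2: take the input tokens and look up an embedding for each,
    # then average them into a single vector.
    vectors = [EMBEDDINGS[VOCAB.index(t)] for t in tokens]
    state = [sum(col) / len(vectors) for col in zip(*vectors)]
    # Step 3: pass the vector through stacked layers with a nonlinearity.
    for layer in HIDDEN:
        state = [math.tanh(x) for x in matvec(state, layer)]
    # Step 4: score every vocabulary entry and normalise to probabilities.
    return softmax(matvec(state, OUTPUT))

probs = next_token_distribution(["the", "cat"])
prediction = VOCAB[probs.index(max(probs))]
print(dict(zip(VOCAB, (round(p, 3) for p in probs))), "->", prediction)
```

Averaging the token embeddings is a deliberate simplification; models such as transformers keep one vector per position and let the layers mix information between them.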

Text applications: writing assistance, code generation, story creation.

Image applications: AI art, product mockups, concept design.

Data applications: creating synthetic datasets for training models.
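As a rough illustration of synthetic data, the sketch below fits a simple statistical model to a few rows and samples new rows from it. It assumes each column is independent and roughly Gaussian, which is a much cruder generator than a neural model. The `REAL` rows are invented for the example, and `fit_gaussians` and `sample_synthetic` are hypothetical names.

```python
import random
import statistics

# Hypothetical "real" measurements: (height_cm, weight_kg) pairs.
REAL = [(170, 65), (182, 80), (165, 58), (175, 72), (190, 88), (160, 54)]

def fit_gaussians(rows):
    """Estimate a mean and standard deviation for each column independently."""
    return [(statistics.mean(col), statistics.stdev(col)) for col in zip(*rows)]

def sample_synthetic(params, n, seed=0):
    """Draw n new rows from the fitted per-column Gaussians."""
    rng = random.Random(seed)
    return [tuple(rng.gauss(mu, sigma) for mu, sigma in params) for _ in range(n)]

params = fit_gaussians(REAL)
synthetic = sample_synthetic(params, n=1000)
print(synthetic[:3])
```

Because the columns are sampled independently, the link between height and weight in `REAL` is lost. Capturing such relationships is exactly what a learned generative model adds over this baseline.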

Slide 17 visualizes how internal AI representations transform input into structured output.

Embeddings matter because they compress meaning into vectors that models can process efficiently.
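One way to see meaning stored in vectors is to compare them with cosine similarity, where related items score closer to 1. The three-dimensional vectors below are written by hand for illustration; real embeddings are learned and typically have hundreds of dimensions or more.

```python
import math

# Hand-written 3-dimensional vectors standing in for learned embeddings.
TOY_VECTORS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: dot product over norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

cat_dog = cosine_similarity(TOY_VECTORS["cat"], TOY_VECTORS["dog"])
cat_car = cosine_similarity(TOY_VECTORS["cat"], TOY_VECTORS["car"])
print(f"cat~dog: {cat_dog:.2f}, cat~car: {cat_car:.2f}")
```

With these made-up values, "cat" and "dog" point in nearly the same direction while "car" does not, which is the kind of structure a model can exploit with cheap vector arithmetic.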

The same principle applies across modern generative models: from GPT to diffusion models, all rely on internal learned representations.
