Generative AI – Core Concept Explained

Understanding how AI models generate new content from learned patterns.

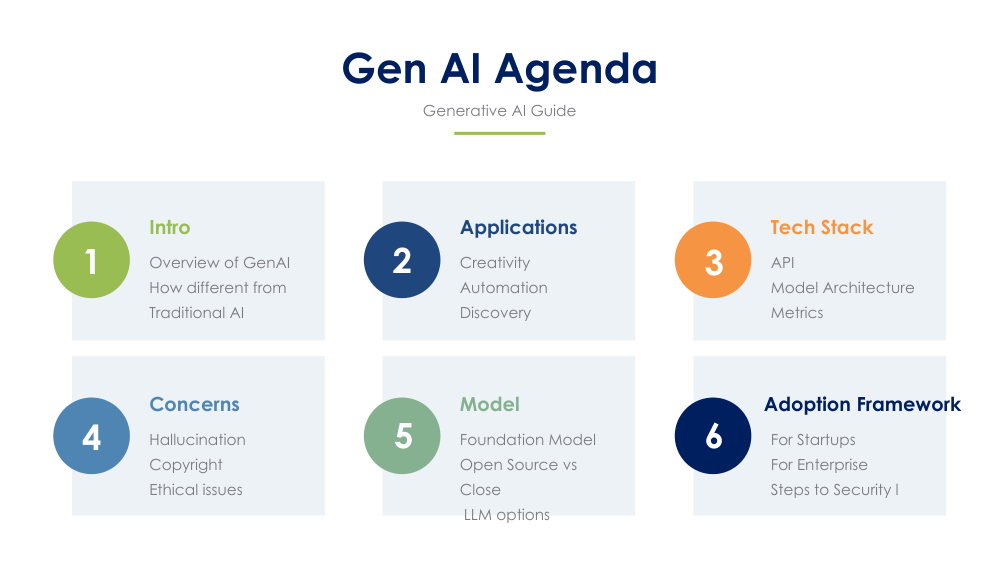

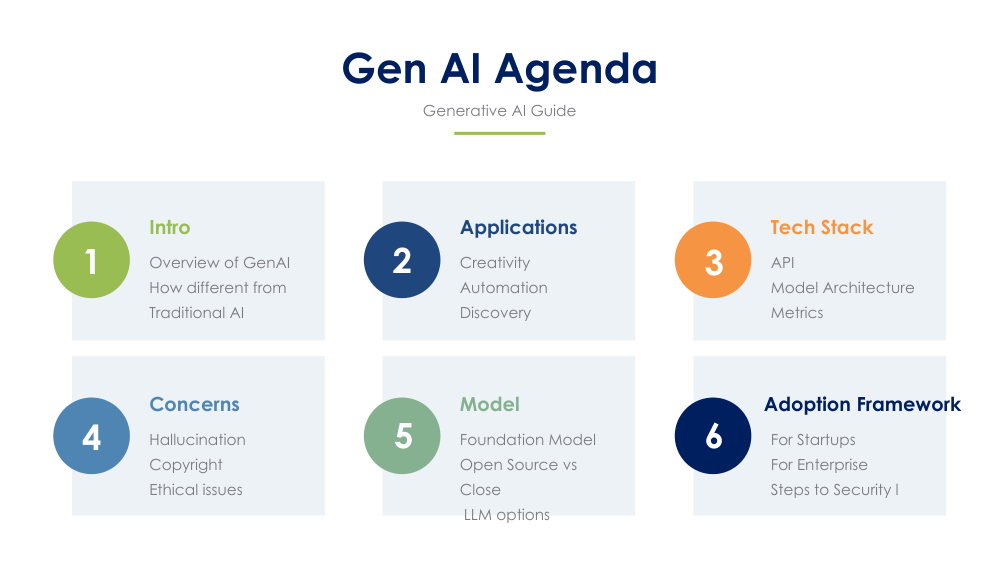

Slide 2 illustrates the fundamental idea of Generative AI: models learn from vast datasets and generate new outputs that resemble the training data, such as text, images, audio, or code.

Models learn statistical patterns from data and encode them in high‑dimensional vector representations (embeddings).
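As a toy illustration of "high-dimensional representations", the sketch below uses hand-picked 4-dimensional vectors (hypothetical values; real models learn their vectors from data, usually with hundreds or thousands of dimensions) and compares them with cosine similarity. Related concepts point in similar directions, so "cat" scores much closer to "kitten" than to "car".

```python
import math

# Hypothetical, hand-picked 4-dimensional "embeddings" for illustration only.
# Real models learn these vectors during training; nobody sets them by hand.
EMBEDDINGS = {
    "cat":    [0.9, 0.8, 0.1, 0.0],
    "kitten": [0.85, 0.75, 0.2, 0.05],
    "car":    [0.1, 0.0, 0.9, 0.8],
}

def cosine_similarity(a, b):
    """Cosine of the angle between vectors a and b (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

if __name__ == "__main__":
    for other in ("kitten", "car"):
        sim = cosine_similarity(EMBEDDINGS["cat"], EMBEDDINGS[other])
        print(f"cat vs {other}: {sim:.3f}")
```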

Generation is guided by probability distributions, enabling varied and creative results.
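Probability-guided generation can be sketched in three moves: the model gives each candidate a raw score (a logit), a softmax turns the scores into probabilities, and one candidate is drawn at random in proportion to its probability. The candidate words and scores below are made up for illustration; the `temperature` parameter is a common knob in such systems, where lower values concentrate probability on the top choice and higher values spread it out for more varied results.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores (logits) into probabilities; temperature must be > 0."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample(options, logits, temperature=1.0, rng=random):
    """Draw one option at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(options, weights=probs, k=1)[0]

if __name__ == "__main__":
    # Hypothetical candidate next words and made-up scores.
    options = ["blue", "clear", "cloudy", "green"]
    logits = [2.0, 1.5, 1.0, -1.0]
    for t in (0.5, 1.0, 2.0):
        print(t, [round(p, 3) for p in softmax(logits, t)])
    rng = random.Random(0)  # seeded so the demo is repeatable
    print([sample(options, logits, 1.0, rng) for _ in range(5)])
```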

The user's input (the prompt) acts as a constraint that steers the direction of the model's output.
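To make "input as a constraint" concrete: the prompt selects which conditional distribution the model samples from, so different prompts push the output in different directions. The lookup table below is a hypothetical stand-in for what a real model computes on the fly; only the idea of conditioning on the input carries over.

```python
import random

# Hypothetical conditional distributions P(next word | prompt).
# A real model computes these from the prompt; here they are hand-written.
NEXT_WORD_PROBS = {
    "The sky is": {"blue": 0.7, "clear": 0.2, "falling": 0.1},
    "The grass is": {"green": 0.8, "wet": 0.15, "tall": 0.05},
}

def continue_prompt(prompt, rng):
    """Sample a next word from the distribution selected by the prompt."""
    dist = NEXT_WORD_PROBS[prompt]
    return rng.choices(list(dist), weights=list(dist.values()), k=1)[0]

if __name__ == "__main__":
    rng = random.Random(42)
    for prompt in NEXT_WORD_PROBS:
        print(prompt, continue_prompt(prompt, rng))
```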

1. The model ingests large datasets (text, images, audio).
2. Neural networks encode the relationships and structure in that data.
3. The model samples likely options one step at a time: token by token for text, or pixel by pixel for some image models.
4. The final content is generated from the learned probability distributions.
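The four steps above can be sketched end to end with a toy bigram model. This is a deliberate simplification: word-pair counts stand in for the neural network, and the three-sentence corpus is invented. Training records how often each word follows another, and generation samples one token at a time from those counts until an end marker appears.

```python
import random
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Ingest and encode: count how often each word follows another."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = ["<s>"] + sentence.split() + ["</s>"]  # start/end markers
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def generate(counts, rng, max_tokens=20):
    """Sample and output: draw one token at a time until the end marker."""
    token, output = "<s>", []
    for _ in range(max_tokens):
        followers = counts[token]
        token = rng.choices(list(followers), weights=list(followers.values()), k=1)[0]
        if token == "</s>":
            break
        output.append(token)
    return " ".join(output)

if __name__ == "__main__":
    corpus = [
        "the cat sat on the mat",
        "the dog sat on the rug",
        "a cat chased the dog",
    ]
    model = train_bigram(corpus)
    rng = random.Random(7)
    for _ in range(3):
        print(generate(model, rng))
```

Because the model keeps only word-pair statistics, it can produce sentences such as "the dog sat on the mat" that appear nowhere in the corpus.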

Text: articles, summaries, chatbots, and creative writing.

Images: art, concept design, and product mockups.

Code: autocompletion, debugging, and rapid prototyping.

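A generative model learns statistical structure rather than storing copies of its training data, although it can occasionally reproduce memorized passages.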

Generative models rely on probabilities, so they can produce many plausible variations instead of a single fixed answer.

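The same prompt does not always produce the same output: random sampling introduces variation between runs, unless a deterministic decoding setting (such as always picking the most likely token) is used.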
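The contrast between sampling and deterministic decoding can be shown with a single fixed prompt. The probability table is hypothetical; the point is that random sampling can return different words on repeated calls, while greedy decoding (always taking the most probable word) returns the same word every time.

```python
import random

# Hypothetical next-word probabilities for one fixed prompt.
PROBS = {"blue": 0.6, "clear": 0.3, "grey": 0.1}

def sampled(rng):
    """Random sampling: repeated calls with the same prompt can differ."""
    return rng.choices(list(PROBS), weights=list(PROBS.values()), k=1)[0]

def greedy():
    """Greedy decoding: always pick the most probable word (deterministic)."""
    return max(PROBS, key=PROBS.get)

if __name__ == "__main__":
    rng = random.Random()  # unseeded: the sampled list varies between runs
    print("sampled:", [sampled(rng) for _ in range(8)])
    print("greedy: ", [greedy() for _ in range(8)])
```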

Continue exploring deeper layers of how these models work.
