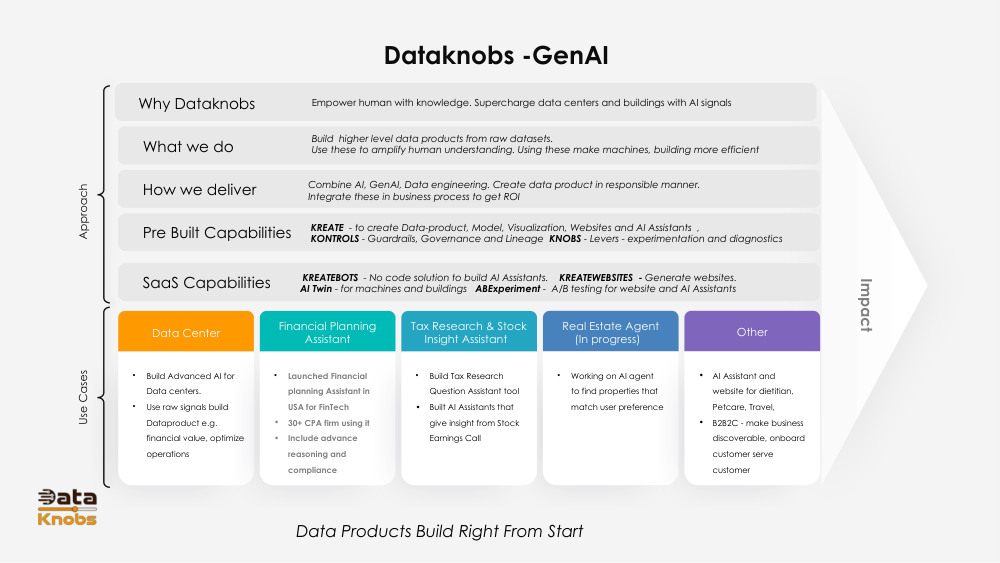

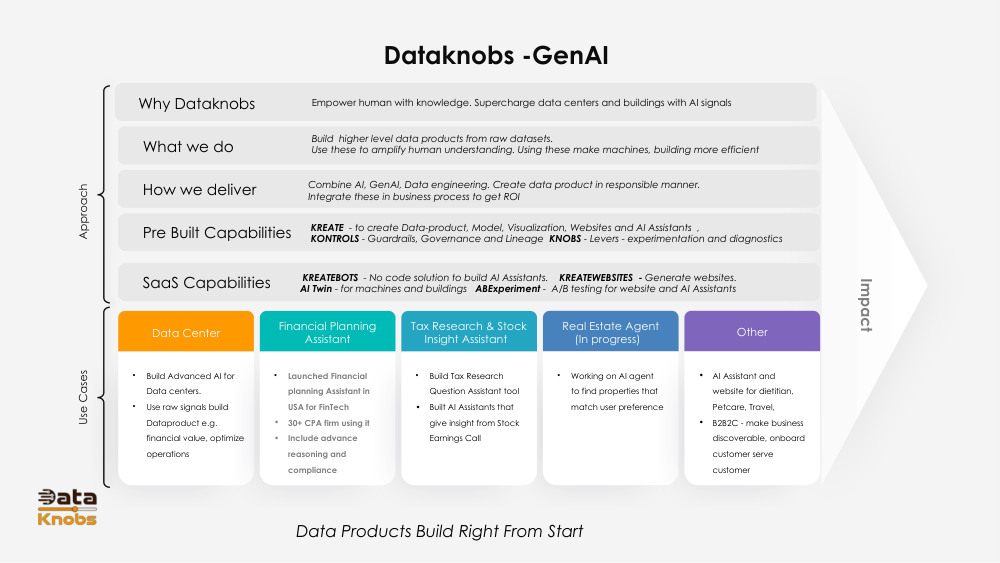

Generative AI: Understanding Slide 5

A clear explanation of the core concept shown in Slide 5 with examples, applications, and technical insights.


Slide 5 illustrates how Generative AI models learn complex data patterns and use them to create new, coherent outputs. This slide emphasizes the relationship between input data, learned representations, and generated content.

Generative models identify patterns in large datasets, learning structures like grammar, visual shapes, or audio sequences.

The model compresses information into a latent space, a mathematical representation of concepts that it can combine and vary to produce new results.

Using learned representations, the model produces new text, images, audio, or code that mimic the style of the training data.
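As a rough illustration of learning patterns from data and then generating new output in the same style, here is a minimal sketch using a character-level bigram (Markov chain) model. This is a deliberately simple stand-in, not how modern generative models work internally: it has no latent space and only remembers which character tends to follow which. The corpus and the names `train_bigram` and `generate` are assumptions made up for this example.

```python
import random
from collections import defaultdict

def train_bigram(text):
    """Count how often each character follows another (the learned 'patterns')."""
    counts = defaultdict(lambda: defaultdict(int))
    for current, following in zip(text, text[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, length, rng):
    """Sample new text one character at a time, weighted by the learned counts."""
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # no known continuation for this character
        chars = list(followers)
        weights = [followers[c] for c in chars]
        out.append(rng.choices(chars, weights=weights)[0])
    return "".join(out)

# Hypothetical toy corpus; a real model would train on far more data.
corpus = "the cat sat on the mat. the dog sat on the log. "
model = train_bigram(corpus)
sample = generate(model, "t", 40, random.Random(0))
print(sample)
```

The generated string is usually not a copy of the corpus, yet every adjacent pair of characters in it was seen during training, which is the sense in which the output mimics the style of the data.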

Large datasets (text, images, audio) enter the model for learning.

The model adjusts internal parameters to reduce prediction errors.

The model forms abstract concepts in a mathematical latent space.

New outputs are created by sampling and decoding latent patterns.
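The four steps above can be sketched end to end with a very small model. The example below assumes a linear autoencoder with tied weights, trained by plain gradient descent, as a stand-in for the much larger neural networks used in practice. The dataset (2-D points near the line y = 2x), the one-dimensional latent space, and all names such as `encode`, `decode`, and `mean_loss` are hypothetical choices for illustration.

```python
import random

rng = random.Random(0)

# Step 1, input data: hypothetical 2-D points scattered around the line y = 2x.
data = []
for _ in range(200):
    x = rng.uniform(-1.0, 1.0)
    data.append((x, 2.0 * x + rng.gauss(0.0, 0.05)))

def encode(w, point):
    """Compress a 2-D point into a single latent coordinate z."""
    return w[0] * point[0] + w[1] * point[1]

def decode(w, z):
    """Map a latent coordinate z back to a 2-D point."""
    return (w[0] * z, w[1] * z)

def mean_loss(w):
    """Average squared reconstruction error over the dataset."""
    total = 0.0
    for p in data:
        q = decode(w, encode(w, p))
        total += (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2
    return total / len(data)

# Step 2, training: adjust the parameters w to reduce the reconstruction error.
w = [0.5, 0.1]  # arbitrary starting parameters
learning_rate = 0.05
initial_loss = mean_loss(w)
for _ in range(500):
    grad = [0.0, 0.0]
    for p in data:
        z = encode(w, p)
        r = (p[0] - w[0] * z, p[1] - w[1] * z)  # reconstruction residual
        wr = w[0] * r[0] + w[1] * r[1]
        for k in range(2):
            # Gradient of the squared error with respect to w[k], averaged over the data.
            grad[k] += -2.0 * (r[k] * z + wr * p[k]) / len(data)
    w = [w[k] - learning_rate * grad[k] for k in range(2)]
final_loss = mean_loss(w)

# Step 3, latent space: every training point becomes one number z.
codes = [encode(w, p) for p in data]
mu = sum(codes) / len(codes)
sigma = (sum((z - mu) ** 2 for z in codes) / len(codes)) ** 0.5

# Step 4, generation: sample new latent values and decode them into new points.
generated = [decode(w, rng.gauss(mu, sigma)) for _ in range(5)]

print(f"loss: {initial_loss:.4f} -> {final_loss:.4f}")
for gx, gy in generated:
    print(f"new point: ({gx:.3f}, {gy:.3f})")
```

The decoded points are not taken from the training set, but they fall close to the same line the data came from, because the learned direction `w` captures that structure. Real generative models replace the linear encoder and decoder with deep networks and use many latent dimensions.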

Text: chatbots, summarization, email drafting, and creative writing tools.

Images: artwork creation, design prototypes, and image restoration.

Audio: voice synthesis, music composition, and sound effects.

Slide 5 visualizes how generative models transform training data into structured latent representations, which they then use for generation.

The latent space allows models to manipulate concepts mathematically, enabling creative output beyond direct copies of training data.
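One common way to manipulate concepts mathematically is to interpolate between two latent vectors. The sketch below assumes two made-up 2-D latent vectors, `z_cat` and `z_dog`, that a hypothetical trained encoder might have produced; in a real system each intermediate vector would be passed to a decoder to yield a blended output.

```python
def lerp(a, b, t):
    """Linear interpolation between latent vectors a and b, with t in [0, 1]."""
    return [(1 - t) * x + t * y for x, y in zip(a, b)]

# Hypothetical latent vectors; real ones have far more dimensions.
z_cat = [0.9, 0.1]
z_dog = [0.1, 0.8]

# Five evenly spaced points on the straight path from z_cat to z_dog.
path = [lerp(z_cat, z_dog, i / 4) for i in range(5)]
for z in path:
    print([round(v, 3) for v in z])
```

The intermediate vectors correspond to no training example, which is one concrete sense in which the output can go beyond direct copies of the data.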

Generative AI simulates creativity by recombining learned patterns, though it doesn’t experience intent or emotion.

Explore deeper techniques and build your own models.
