Generative AI Tutorial – Slide 6

Understanding how Generative AI models learn patterns and create new data.

Slide 6 illustrates how generative models learn from training data by identifying underlying patterns and then sampling from these patterns to produce new outputs. This slide emphasizes the transition from raw data to structured patterns, forming the foundation for generative capabilities.

Models learn statistical patterns from examples, such as language structure or image composition.

The latent space is a compressed representation of learned features that lets models navigate between concepts and blend them.
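One way to picture "blending concepts" is to walk in a straight line between two points in the compressed representation. The sketch below is only an illustration: the vectors `z_cat` and `z_dog` and the helper `lerp` are hypothetical, the numbers are made up, and a real model would also need a decoder to turn each point back into an image or sentence.

```python
def lerp(z_a, z_b, t):
    """Linearly interpolate between two latent vectors; t=0 gives z_a, t=1 gives z_b."""
    return [(1 - t) * a + t * b for a, b in zip(z_a, z_b)]

# Hypothetical latent codes for two concepts (values are made up for illustration).
z_cat = [0.9, 0.1, -0.3]
z_dog = [0.1, 0.8, 0.5]

# Walk from one concept to the other in five evenly spaced steps.
path = [lerp(z_cat, z_dog, i / 4) for i in range(5)]
midpoint = path[2]  # an equal blend of the two codes
print(midpoint)
```

Each intermediate point is a mix of the two endpoints, which is the sense in which a model can "navigate" its learned features.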

The model generates new outputs by sampling possible combinations from learned patterns.
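A very small stand-in for "learn patterns, then sample" is a character-level Markov chain. It is far simpler than the models this tutorial covers, and the corpus, function names, and seed below are assumptions chosen for illustration, but it shows both halves: counting which character tends to follow which, then sampling from those counts.

```python
import random
from collections import defaultdict

def learn_bigrams(text):
    """Record which character follows which in the training text."""
    followers = defaultdict(list)
    for current, nxt in zip(text, text[1:]):
        followers[current].append(nxt)
    return followers

def sample_text(followers, start, length, rng):
    """Generate text by repeatedly sampling a follower of the last character."""
    out = [start]
    for _ in range(length - 1):
        options = followers.get(out[-1])
        if not options:  # dead end: this character was never followed by anything
            break
        out.append(rng.choice(options))
    return "".join(out)

corpus = "the cat sat on the mat and the rat ran"
model = learn_bigrams(corpus)
rng = random.Random(0)  # fixed seed so the run is repeatable
generated = sample_text(model, "t", 20, rng)
print(generated)
```

Because followers are stored with repeats, frequent pairs are sampled more often, so the output follows the statistics of the corpus without being a copy of it.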

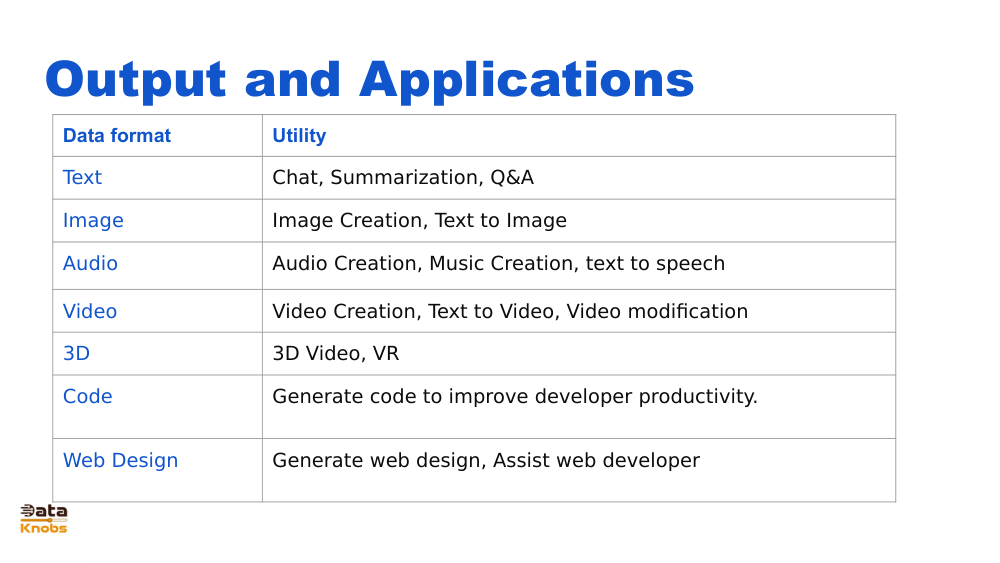

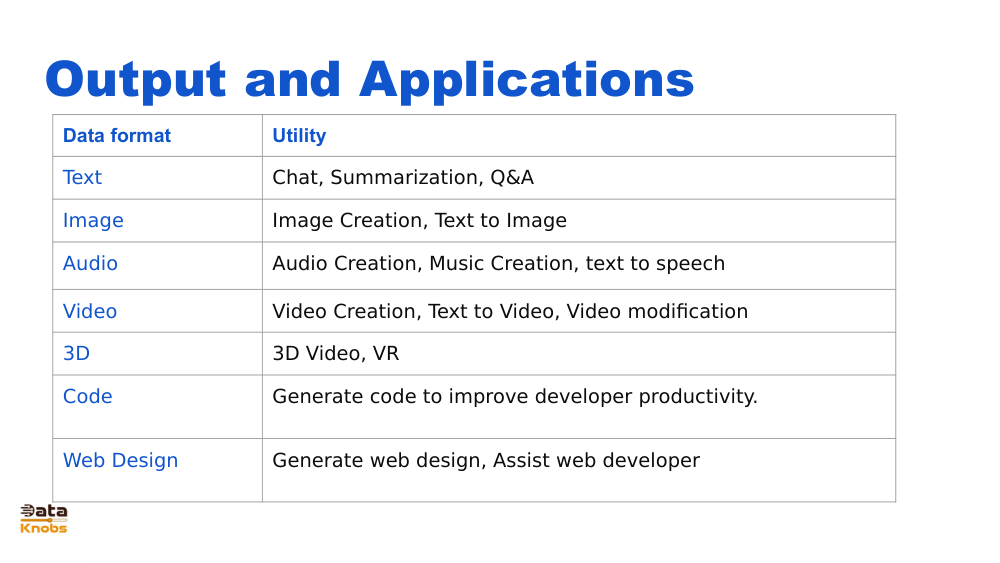

The input is images, text, audio, or combined data.

The model learns relationships and hidden structures in that data.

The data is compressed into numeric representations.

The model creates new content based on the learned patterns.
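The four stages above can be sketched end to end on toy data. This is a minimal sketch under strong assumptions: the input is a handful of made-up 2D points, "training" is just finding the main direction of the point cloud, and the compressed representation is a single number per point. Real generative models learn nonlinear encoders and decoders; the names `fit_axis`, `encode`, and `decode` are hypothetical.

```python
import math
import random

def fit_axis(points):
    """'Training': find the mean and the main direction of a 2D point cloud."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    angle = 0.5 * math.atan2(2 * sxy, sxx - syy)  # orientation of the principal axis
    return (mx, my), (math.cos(angle), math.sin(angle))

def encode(point, mean, axis):
    """Compress a 2D point into one number: its position along the axis."""
    return (point[0] - mean[0]) * axis[0] + (point[1] - mean[1]) * axis[1]

def decode(z, mean, axis):
    """Map a latent number back to a 2D point on the axis."""
    return (mean[0] + z * axis[0], mean[1] + z * axis[1])

# Input data: made-up points lying roughly along the line y = 2x.
data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8), (5.0, 10.1)]
mean, axis = fit_axis(data)                      # learn the hidden structure
codes = [encode(p, mean, axis) for p in data]    # compressed numeric representation
spread = math.sqrt(sum(z * z for z in codes) / len(codes))

rng = random.Random(0)
new_point = decode(rng.gauss(0.0, spread), mean, axis)  # generate new content
print(new_point)
```

The generated point is not one of the inputs, yet it lies along the same trend the inputs follow.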

Generating articles, marketing copy, or story drafts.

Creating artwork, illustrations, and visual prototypes.

Producing synthetic training data for ML systems, which can reduce privacy risk because generated records need not correspond to real individuals.

Generative models such as Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformers operate on the principle of modeling probability distributions over data. Slide 6 emphasizes that once the distribution is learned, the model can sample new outputs from it.
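The "learn a distribution, then sample from it" idea can be shown in its simplest form with a one-dimensional normal distribution. VAEs, GANs, and Transformers model far more complex, high-dimensional distributions, so treat this only as an analogy; the training values below are made up.

```python
from statistics import NormalDist

# Made-up training data, e.g. measured heights in centimetres.
training_data = [162.0, 170.5, 168.2, 175.1, 159.8, 171.3, 166.4, 173.0]

# "Learning": estimate the parameters of the distribution from the examples.
learned = NormalDist.from_samples(training_data)

# "Generation": draw new values from the learned distribution.
new_samples = learned.samples(5, seed=42)

print(f"learned mean={learned.mean:.1f}, stdev={learned.stdev:.1f}")
print([round(x, 1) for x in new_samples])
```

The samples are plausible values in the same range as the training data, produced from the two learned parameters rather than from the stored examples.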

The latent space is a mathematical space in which the model organizes the features it has learned.

Sampling introduces randomness, so the same model can produce a practically unlimited variety of outputs.
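How much randomness sampling introduces is often controlled by a "temperature" setting. The slide does not name it, so it is brought in here as an assumption, along with the made-up word scores. The sketch converts scores to probabilities and draws from them at several temperatures.

```python
import math
import random

def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical next-word scores a model might assign after "The sky is".
words = ["blue", "clear", "falling", "green"]
scores = [3.0, 2.0, 0.5, 0.1]

rng = random.Random(0)  # fixed seed so the run is repeatable
for temperature in (0.2, 1.0, 2.0):
    probs = softmax(scores, temperature)
    picks = rng.choices(words, weights=probs, k=8)
    print(temperature, picks)
```

At low temperature almost every draw is the top-scoring word; at higher temperature less likely words appear more often, which is where the variety in generated outputs comes from.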

Well‑trained models generate new samples that follow learned patterns rather than reproducing training examples directly.
