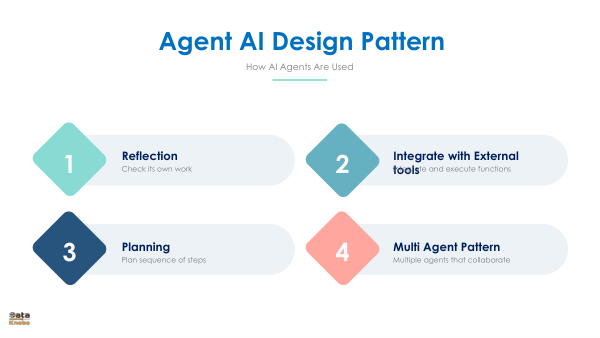

AI Agent Design Patterns

To build reliable and scalable autonomous systems, you must move beyond basic prompts. Discover the foundational architectural patterns that dictate how agents reason, plan, and execute tasks.