Advanced

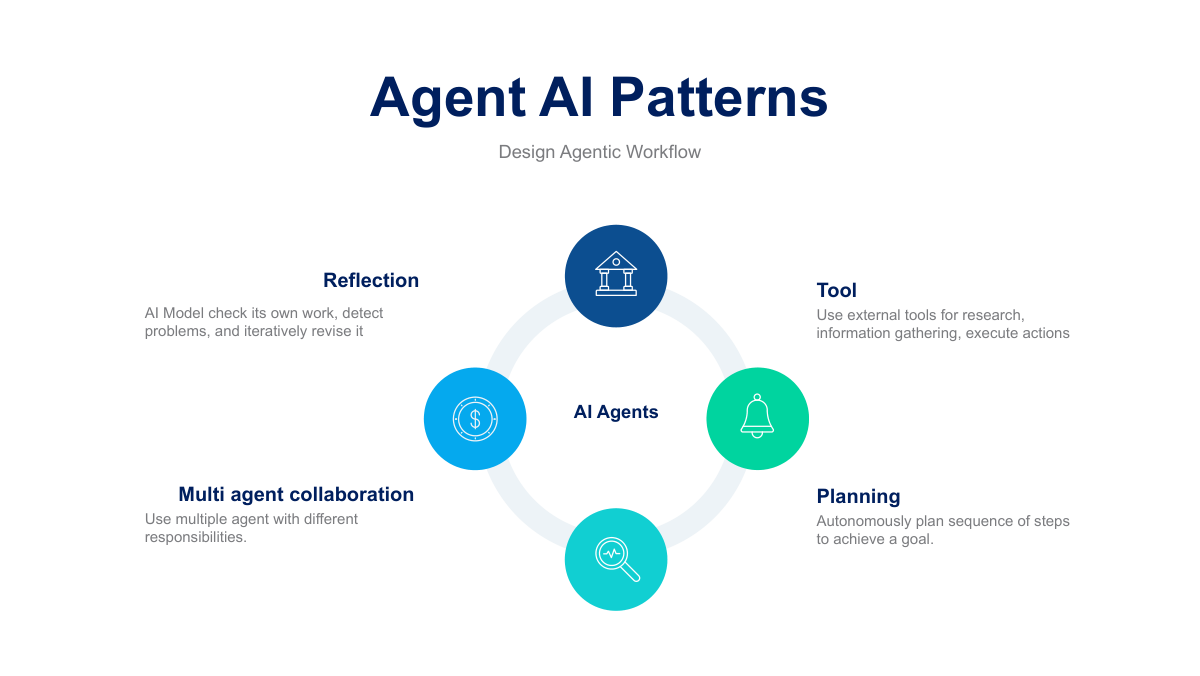

Agent Patterns.

To build reliable, enterprise-grade AI, you need more than a single prompt and response: you have to orchestrate the model in structured cognitive loops. This section expands on four of them: Tool Use, Reflection, Planning, and Multi-Agent Collaboration.
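As a minimal sketch of what one such loop looks like, the Reflection pattern reduces to draft, critique, revise, repeated until the critic accepts the draft or a round budget runs out. The function `reflection_loop` and the two callables are hypothetical stand-ins: in a real system `generate` and `critique` would each wrap a model call, but no specific LLM API is assumed here, and toy functions take their place so the loop runs on its own.

```python
from typing import Callable, Optional, Tuple

# Hypothetical signatures; in practice each would wrap an LLM call.
Generate = Callable[[str, Optional[str]], str]  # (task, feedback) -> draft
Critique = Callable[[str, str], Optional[str]]  # (task, draft) -> feedback, or None if acceptable


def reflection_loop(task: str, generate: Generate, critique: Critique,
                    max_rounds: int = 3) -> Tuple[str, int]:
    """Draft, critique, and revise until the critic accepts or the budget runs out.

    Returns the last draft and the number of rounds used.
    """
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate(task, feedback)   # produce or revise a draft using prior feedback
        feedback = critique(task, draft)   # None means the critic is satisfied
        if feedback is None:
            return draft, round_no
    return draft, max_rounds               # budget exhausted: return the best effort


# Toy stand-ins for model calls (hypothetical, for illustration only).
def toy_generate(task: str, feedback: Optional[str]) -> str:
    # Adds a closing period only after the critic has asked for one.
    return task.capitalize() + ("." if feedback else "")


def toy_critique(task: str, draft: str) -> Optional[str]:
    return None if draft.endswith(".") else "End the sentence with a period."


result, rounds = reflection_loop("agents need loops", toy_generate, toy_critique)
print(result, rounds)  # prints: Agents need loops. 2
```

The `max_rounds` cap is the design choice that keeps the loop reliable: without it, a critic that never approves would make the agent run indefinitely.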