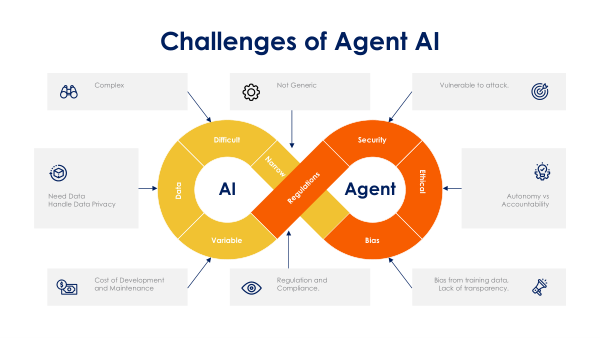

The Challenges of AI Agents.

Giving an LLM the ability to take action introduces entirely new classes of risk. Explore the technical and operational hurdles that must be overcome to safely deploy autonomous systems in production.