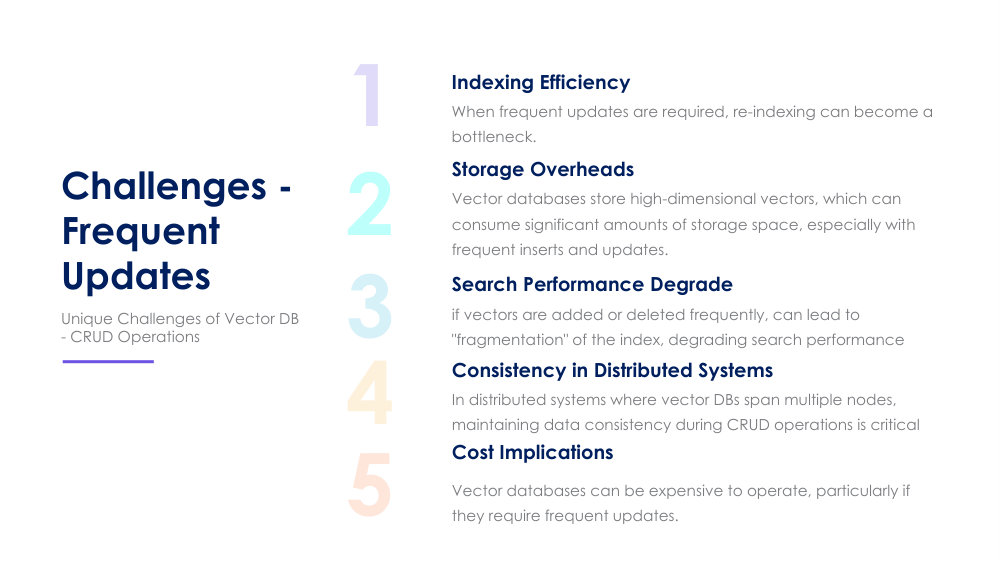

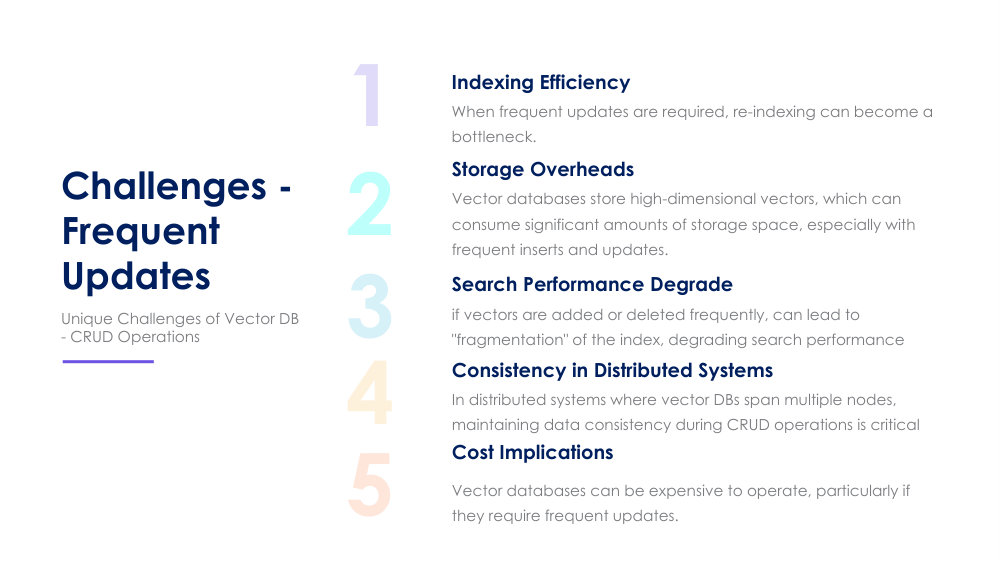

Five challenges of frequently updating a vector database (indexing, storage overhead, performance, consistency, and cost) and how each affects evolving vector workloads.

Vector databases are optimized for high‑dimensional embeddings, but frequent updates introduce issues that impact efficiency, correctness, and total system cost. Understanding these challenges is key for designing scalable retrieval architectures.

**Indexing.** Frequent inserts and deletes fragment the index. The index then needs either a rebuild or lazy updates, and lazy updates avoid the rebuild at the cost of degraded recall.
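Lazy deletion can be illustrated with a toy flat index in Python. This is a hypothetical sketch, not any particular database's implementation. `TombstoneIndex`, its methods, and the `candidates` parameter are invented here. The `candidates` parameter is an assumed stand-in for a bounded search budget, such as a graph index's candidate list. Deletes only record a tombstone, so dead vectors still occupy candidate slots and a query can come back with fewer than `k` live results.

```python
import math


class TombstoneIndex:
    """Hypothetical toy index that deletes lazily by recording tombstones."""

    def __init__(self):
        self.ids = []         # physical slots, in insertion order
        self.vectors = []
        self.deleted = set()  # tombstoned ids, not yet removed by a rebuild

    def insert(self, vec_id, vector):
        self.ids.append(vec_id)
        self.vectors.append(vector)

    def delete(self, vec_id):
        # Lazy delete: the vector stays in place; only a marker is recorded.
        self.deleted.add(vec_id)

    def fragmentation(self):
        # Fraction of physical slots occupied by dead entries.
        return len(self.deleted) / len(self.ids) if self.ids else 0.0

    def search(self, query, k, candidates):
        # Examine only the `candidates` closest slots (an assumed stand-in for
        # an ANN search budget), then filter tombstones out afterwards.
        scored = sorted(
            (math.dist(query, v), i) for i, v in zip(self.ids, self.vectors)
        )[:candidates]
        return [i for _, i in scored if i not in self.deleted][:k]


idx = TombstoneIndex()
for n in range(10):
    idx.insert(n, (float(n), 0.0))
for n in (1, 2, 3):
    idx.delete(n)

print(idx.fragmentation())                         # 0.3
print(idx.search((0.0, 0.0), k=3, candidates=4))   # [0]
print(idx.search((0.0, 0.0), k=3, candidates=10))  # [0, 4, 5]
```

Raising `candidates` recovers the missing results, but each query then does more work. This is why tombstone-heavy indexes are eventually rebuilt or compacted.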

**Storage overhead.** Retained vector versions, deleted vectors that have not yet been purged, and delayed compaction inflate storage usage over time.
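Storage overhead is often summarized as space amplification: bytes physically stored divided by bytes that are still live. The Python sketch below models an append-only record log in which updates write new versions and deletes write tombstones. The `Record` layout, the 768-dimensional float32 vector size, and the `compact` helper are hypothetical assumptions for illustration.

```python
from dataclasses import dataclass

VEC_BYTES = 768 * 4  # hypothetical: 768-dimensional float32 embeddings


@dataclass
class Record:
    vec_id: int
    version: int
    nbytes: int
    deleted: bool = False  # True for a delete marker (tombstone)


def live_records(log):
    # The highest version of each id wins; a winning tombstone hides the id.
    latest = {}
    for rec in log:
        if rec.vec_id not in latest or rec.version > latest[rec.vec_id].version:
            latest[rec.vec_id] = rec
    return [r for r in latest.values() if not r.deleted]


def space_amplification(log):
    # Bytes physically stored divided by bytes that are still live.
    physical = sum(r.nbytes for r in log)
    live = sum(r.nbytes for r in live_records(log))
    return physical / live if live else float("inf")


def compact(log):
    # Rewrite the log keeping only live, latest-version records.
    return live_records(log)


log = [
    Record(1, 1, VEC_BYTES),
    Record(2, 1, VEC_BYTES),
    Record(1, 2, VEC_BYTES),               # id 1 re-embedded: old copy kept
    Record(3, 1, VEC_BYTES),
    Record(3, 2, 0, deleted=True),         # id 3 deleted via a tombstone
]
print(space_amplification(log))           # 2.0
print(space_amplification(compact(log)))  # 1.0
```

Until compaction runs, half of the bytes in this example belong to superseded or deleted vectors.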

**Performance.** As indexes grow and become unbalanced, query latency increases and each update becomes more expensive to apply.
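Partition-based (IVF-style) indexes show one way unbalanced growth can occur. These indexes group vectors around centroids that are chosen at build time. The Python sketch below is a hypothetical illustration. The centroids, the data, and the single-partition probe are assumptions chosen to make the numbers easy to follow. When new data drifts toward one region, that partition swells, and every query routed to it scans more vectors.

```python
import math

# Hypothetical IVF-style setup: centroids are fixed when the index is built.
CENTROIDS = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]


def assign(vector):
    # Each vector goes to the partition of its nearest centroid.
    return min(range(len(CENTROIDS)), key=lambda c: math.dist(vector, CENTROIDS[c]))


def partition_sizes(vectors):
    sizes = [0] * len(CENTROIDS)
    for v in vectors:
        sizes[assign(v)] += 1
    return sizes


def imbalance(sizes):
    # Largest partition relative to the mean partition size (1.0 = balanced).
    return max(sizes) / (sum(sizes) / len(sizes))


# Build-time data covers the space evenly: a 14 x 14 grid, 49 points per partition.
initial = [(x + 0.5, y + 0.5) for x in range(-2, 12) for y in range(-2, 12)]
# Later inserts cluster in a region the build-time centroids did not anticipate.
drifted = [(9.0 + 0.01 * i, 9.0) for i in range(196)]

before = partition_sizes(initial)
after = partition_sizes(initial + drifted)
print(before, imbalance(before))  # [49, 49, 49, 49] 1.0
print(after, imbalance(after))    # [49, 49, 49, 245] 2.5

# A query probing only its nearest partition now scans 5x more vectors.
query = (9.5, 9.5)
print(before[assign(query)], after[assign(query)])  # 49 245
```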

**Consistency.** With dynamic data, it becomes harder to keep vector embeddings, their metadata, and the index consistent with one another.
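One way to detect drift between embeddings, metadata, and the index is a periodic reconciliation pass. Each stored embedding is stamped with the version of the source record it was computed from, and the pass compares those stamps against the source of truth. The Python sketch below assumes hypothetical `SourceDoc` and `IndexEntry` records and a reconciliation function named `find_inconsistencies`. It is not any specific product's API.

```python
from dataclasses import dataclass


@dataclass
class SourceDoc:
    doc_id: str
    content_version: int  # bumped whenever the text or metadata changes


@dataclass
class IndexEntry:
    doc_id: str
    embedded_version: int  # content version the stored embedding came from


def find_inconsistencies(docs, index):
    """Return (stale, orphaned, missing) doc ids, each sorted."""
    source = {d.doc_id: d.content_version for d in docs}
    indexed = {e.doc_id: e.embedded_version for e in index}
    # Embedding computed from an older version of the document.
    stale = sorted(i for i, v in indexed.items() if i in source and v < source[i])
    # Still searchable even though the source document was deleted.
    orphaned = sorted(i for i in indexed if i not in source)
    # Present in the source of truth but never embedded.
    missing = sorted(i for i in source if i not in indexed)
    return stale, orphaned, missing


docs = [SourceDoc("a", 2), SourceDoc("b", 1), SourceDoc("c", 1)]
index = [IndexEntry("a", 1), IndexEntry("b", 1), IndexEntry("d", 3)]
print(find_inconsistencies(docs, index))  # (['a'], ['d'], ['c'])
```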

**Cost.** Reindexing, storage growth, and compute overhead all raise the operational cost of frequent updates.
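A back-of-the-envelope formula shows how these factors compound. Storage cost scales with live data multiplied by space amplification, and reindexing cost scales with rebuild frequency. The Python function below is a hypothetical model. Its parameter names and example prices are invented for illustration and do not reflect any provider's pricing.

```python
def monthly_cost(live_gb, space_amplification, price_per_gb_month,
                 rebuilds_per_month, node_hours_per_rebuild, price_per_node_hour):
    """Hypothetical cost model: amplified storage plus periodic reindexing compute."""
    storage = live_gb * space_amplification * price_per_gb_month
    reindexing = rebuilds_per_month * node_hours_per_rebuild * price_per_node_hour
    return storage + reindexing


# All inputs are made-up example values, not real pricing.
static = monthly_cost(100, 1.0, 0.10, 0, 3, 1.50)
dynamic = monthly_cost(100, 2.0, 0.10, 4, 3, 1.50)
print(round(static, 2), round(dynamic, 2))  # 10.0 38.0
```

Under these assumed numbers, doubling space amplification and adding four rebuilds a month raises the total from 10.0 to 38.0 units. The point is the structure of the formula, not the specific figures.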

A typical update cycle unfolds in five stages:

1. New or changed embeddings enter the system as inserts or updates.
2. Index performance drops until a batch rebuild or background merge occurs.
3. Old vectors, tombstones, and duplicate nodes accumulate.
4. Background jobs for compaction and index maintenance consume CPU and memory.
5. Extra compute, extra storage, and performance tuning drive up costs.
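This cycle often appears as a main-plus-delta design. New writes land in a small buffer that is searched exhaustively, and a merge periodically folds that buffer into the optimized index. The Python class below is a hypothetical sketch of the pattern. Names such as `MainPlusDelta`, `merge_threshold`, and `pending` are invented here. For simplicity, the merge runs inline when the buffer fills rather than as a true background job.

```python
import math


class MainPlusDelta:
    """Hypothetical sketch: an optimized main segment plus a small mutable delta."""

    def __init__(self, merge_threshold):
        self.main = {}           # stands in for an index rebuilt on each merge
        self.delta = {}          # recent upserts, searched by brute force
        self.tombstones = set()  # deletes not yet applied to `main`
        self.merge_threshold = merge_threshold
        self.merges = 0

    def upsert(self, vec_id, vector):
        self.delta[vec_id] = vector
        self.tombstones.discard(vec_id)
        if len(self.delta) >= self.merge_threshold:
            self.merge()

    def delete(self, vec_id):
        self.delta.pop(vec_id, None)
        self.tombstones.add(vec_id)

    def pending(self):
        # Work the optimized path cannot serve yet: unmerged upserts and deletes.
        return len(self.delta) + len(self.tombstones)

    def merge(self):
        # Maintenance step: fold the delta into main and purge tombstoned ids.
        self.main.update(self.delta)
        for vec_id in self.tombstones:
            self.main.pop(vec_id, None)
        self.delta.clear()
        self.tombstones.clear()
        self.merges += 1

    def search(self, query, k):
        # Delta entries override main; tombstones hide main entries until a merge.
        live = {i: v for i, v in self.main.items() if i not in self.tombstones}
        live.update(self.delta)
        return sorted(live, key=lambda i: math.dist(query, live[i]))[:k]


store = MainPlusDelta(merge_threshold=3)
store.upsert(1, (0.0, 0.0))
store.upsert(2, (1.0, 0.0))
print(store.pending(), store.merges)  # 2 0
store.upsert(3, (2.0, 0.0))           # third upsert triggers a merge
print(store.pending(), store.merges)  # 0 1
store.delete(1)
print(store.search((0.0, 0.0), k=2))  # [2, 3]
```

The threshold controls the trade-off. A larger delta means fewer merges but more brute-force work per query in the meantime.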

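The two kinds of workload compare as follows:

- **Static or batch-updated workloads:** optimized for fast queries, minimal reindexing, and lower costs.
- **Frequently updated workloads:** require continuous updates, reindexing, and higher resource usage.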

**Why are updates costly for vector databases?**

Most vector index structures are optimized for static data or batch updates, so incremental changes are expensive to apply.

**Do all vector databases handle updates the same way?**

No. Some use append-only logs, others rebuild their indexes, and others rely on background compaction.

**How do frequent updates affect search results?**

Fragmented indexes and unmerged nodes can slow queries and reduce recall.
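Recall is usually measured against an exact brute-force search over the same data. The Python sketch below assumes a simplified search path that only sees vectors already merged into the index, so recently inserted but unmerged vectors are invisible to it. The functions `top_k` and `recall_at_k` and the data are hypothetical.

```python
import math


def top_k(query, vectors, k):
    # Exact nearest neighbours by brute force over a dict of id -> vector.
    return sorted(vectors, key=lambda i: math.dist(query, vectors[i]))[:k]


def recall_at_k(approx_ids, exact_ids):
    # Fraction of the true top-k neighbours that the approximate search found.
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)


# Hypothetical state: ids 0-9 are merged into the searchable index, while
# ids 10-14 were inserted recently and have not been merged yet.
merged = {i: (float(i), 0.0) for i in range(10)}
unmerged = {i: (float(i - 10) + 0.5, 0.0) for i in range(10, 15)}

query, k = (0.0, 0.0), 4
exact = top_k(query, {**merged, **unmerged}, k)  # ground truth over all data
approx = top_k(query, merged, k)                 # search that misses unmerged nodes
print(exact, approx)               # [0, 10, 1, 11] [0, 1, 2, 3]
print(recall_at_k(approx, exact))  # 0.5
```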
