Overview

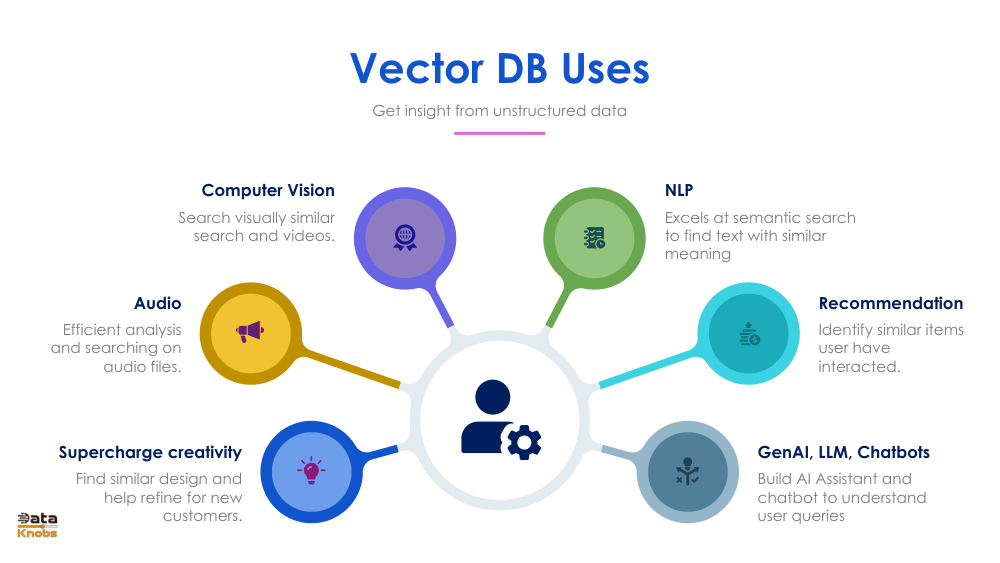

Vector databases store embeddings: numerical vector representations of text, images, audio, user behavior, and other data. Because similar items map to nearby vectors, embeddings let machines perform similarity search, classification, clustering, and retrieval at scale.
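To make similarity search concrete, here is a minimal sketch in plain Python. The three-dimensional vectors and the names `EMBEDDINGS`, `cosine_similarity`, and `nearest` are invented for illustration; real embeddings come from a trained model and typically have hundreds or thousands of dimensions. A vector database would also usually replace this brute-force scan with an approximate index.

```python
import math

# Toy embeddings, made up for illustration only (not produced by a real model).
EMBEDDINGS = {
    "cat": [0.90, 0.10, 0.00],
    "kitten": [0.85, 0.15, 0.05],
    "car": [0.10, 0.90, 0.20],
    "truck": [0.05, 0.85, 0.30],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; closer to 1.0 means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest(query, k=2):
    """Return the names of the k stored items most similar to the query vector."""
    scored = [
        (cosine_similarity(query, vector), name)
        for name, vector in EMBEDDINGS.items()
    ]
    # Highest similarity first.
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]


if __name__ == "__main__":
    # A query vector that sits close to the "cat"-like items.
    print(nearest([0.88, 0.12, 0.02], k=2))
```

The scan compares the query against every stored vector, which is fine for a handful of items but grows linearly with collection size. That cost is the main reason dedicated vector databases exist.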

Many modern AI applications rely on vector databases to return relevant results quickly and accurately. The sections below cover the core concepts and real-world use cases.