Overview

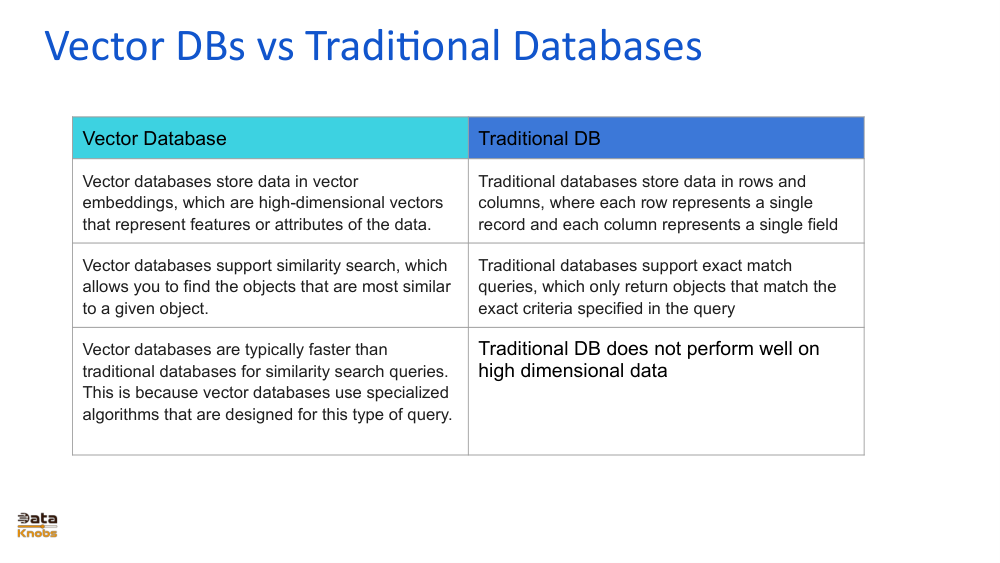

Traditional databases excel at structured data, exact matching, and transactional workloads. In contrast, vector databases store numerical embeddings representing meaning, enabling similarity search across high‑dimensional data.

This shift is critical for AI-powered search, recommendation systems, and applications where semantic relationships matter more than exact matches.