Overview

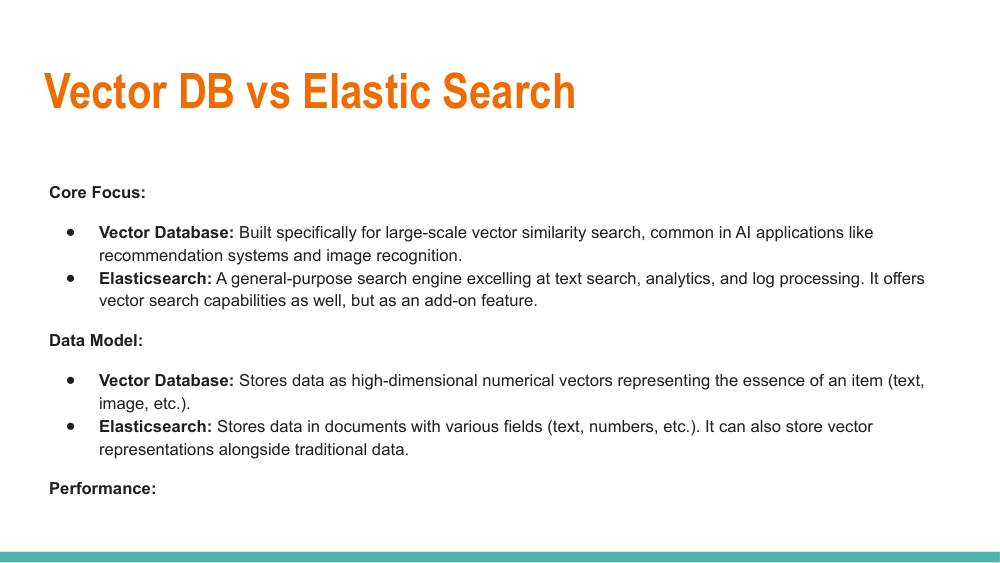

Search workloads have expanded beyond traditional keyword matching. As AI-powered applications become more common, organizations must choose between classical text search engines such as Elasticsearch and specialized vector databases optimized for high-dimensional embeddings.

This page breaks down the core differences between the two technologies so you can choose the right one for your use case.