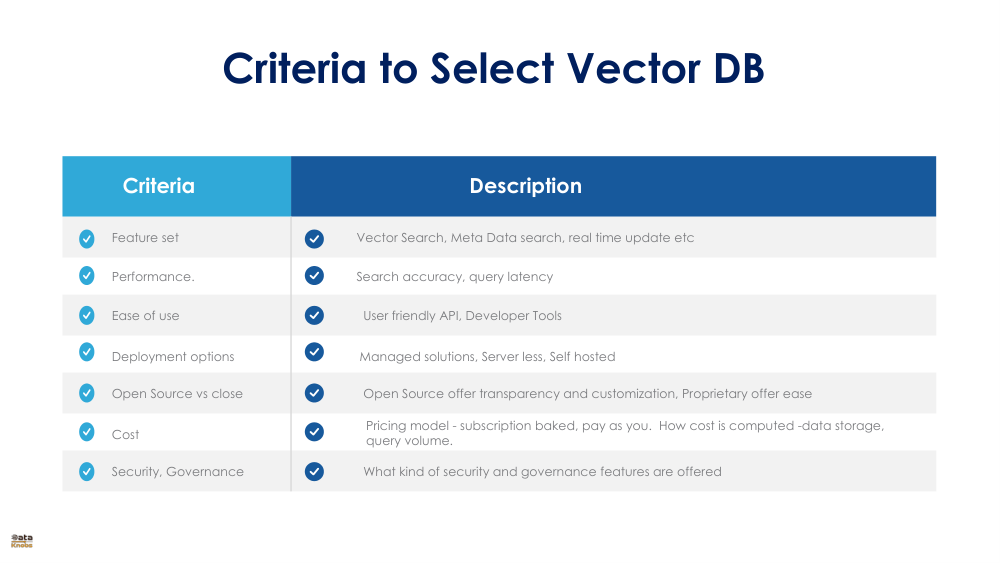

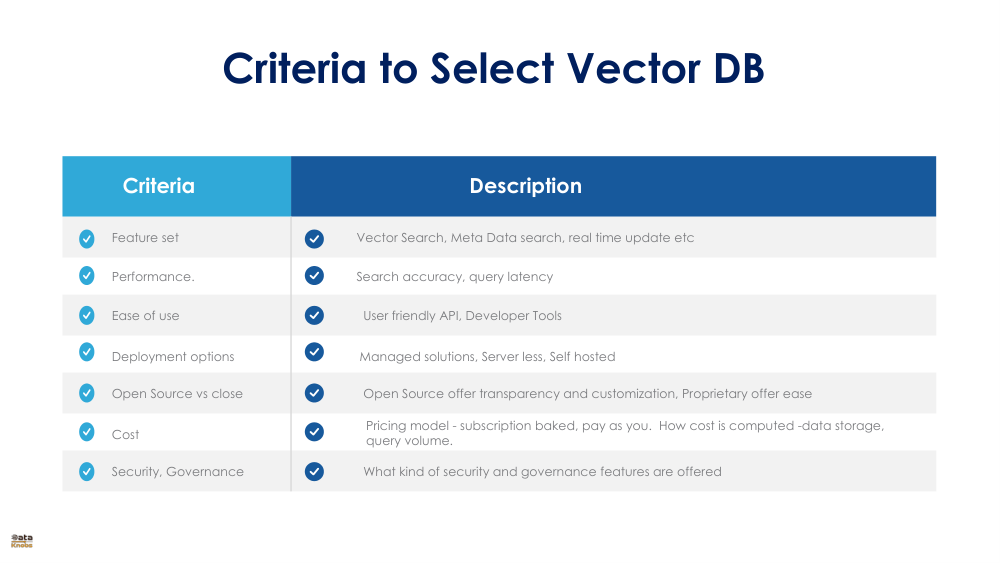

Criteria to Select a Vector Database

Understand the essential factors like scalability, performance, deployment, security, and ecosystem support when choosing a vector database for AI and retrieval applications.

Get Started

Understand the essential factors like scalability, performance, deployment, security, and ecosystem support when choosing a vector database for AI and retrieval applications.

Get Started

Vector databases are essential for similarity search, recommendation systems, embeddings storage, and retrieval-augmented generation. Selecting the right one requires understanding how well it scales, performs, secures data, fits your deployment stack, and integrates with tools in your ecosystem.

Supports growing volumes of embeddings, horizontal sharding, and multi-node expansion.

Fast vector search, low latency retrieval, and optimized ANN indexing structures.

Supports cloud, on‑prem, hybrid, SaaS, and edge environments.

Authentication, encryption, VPC support, audit logs, and enterprise compliance.

Works seamlessly with AI frameworks, LLMs, MLOps tools, and data pipelines.

Define expected scale: number of vectors, dimensionality, QPS requirements.

Benchmark search performance using real-world workloads.

Assess deployment options that fit organizational constraints.

Verify security posture and compliance readiness.

Check API ecosystem, SDK availability, and community support.

Store and retrieve embeddings for LLM grounding.

Serve personalized content at scale.

Improve search accuracy using vector similarity.

Not always, but they offer significant speed and scalability advantages over traditional databases.

Depending on architecture, from millions to trillions across distributed clusters.

Many do, combining keyword and vector search for improved relevance.

Use these criteria to evaluate platforms confidently and build high‑performance AI systems.

Explore More Guides