Generative AI Tutorial – Slide 22

A deep explanation of the concept illustrated in Slide 22, including examples, applications, and technical insights.


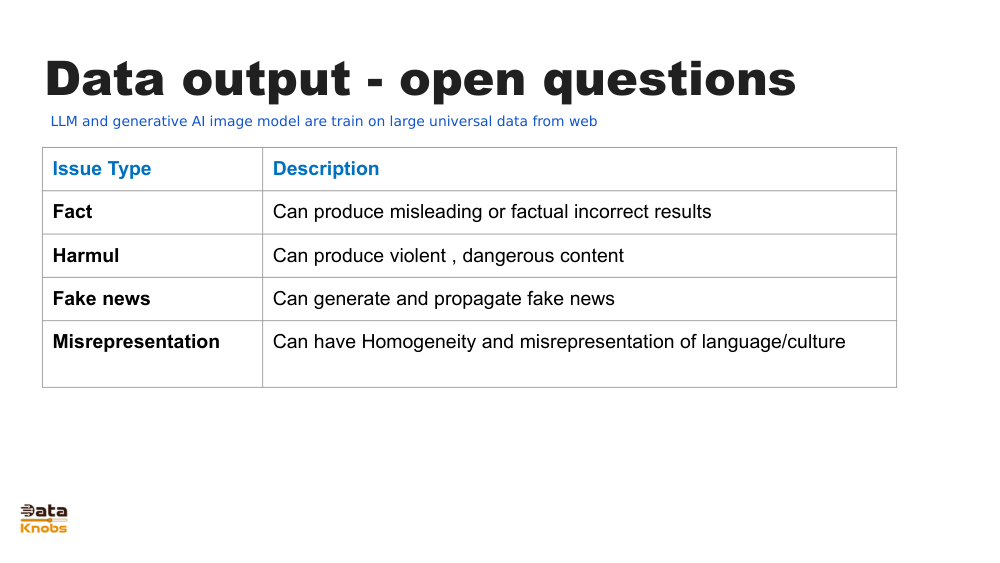

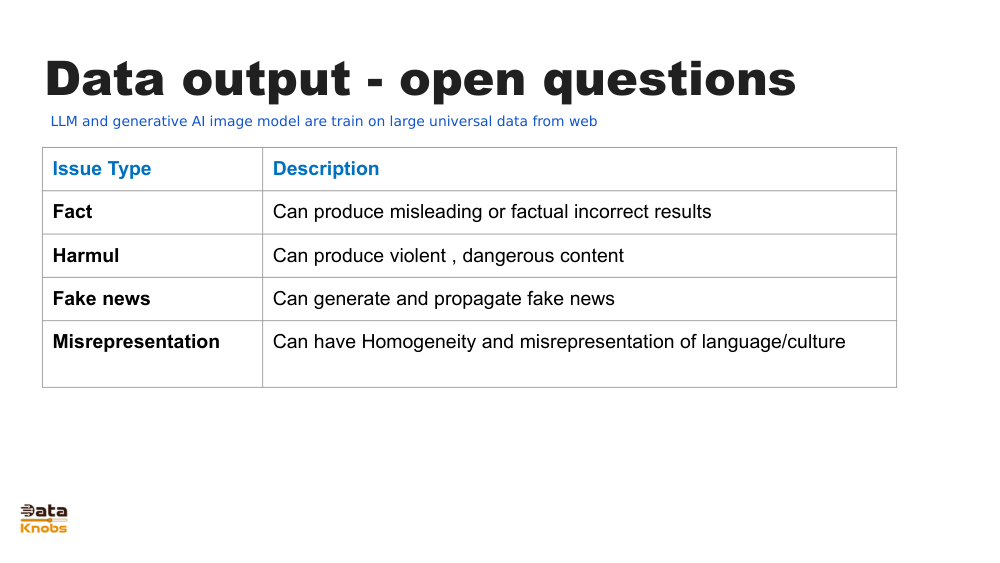

Slide 22 typically illustrates how generative models transform inputs into outputs by learning patterns, structures, and relationships within data. The visual often highlights a model pipeline where prompts or raw data travel through neural network layers to produce novel outputs such as text, images, or structured content.

Models learn internal representations of language, images, or patterns that enable them to generate coherent outputs.

User prompts guide model behavior. The slide shows how input tokens move through layers to form predictions.

Models pick each next token from a learned probability distribution over the vocabulary, either by taking the most likely token or by sampling from that distribution.
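To make the idea of choosing from a probability distribution concrete, here is a minimal sketch in plain Python. The candidate tokens and their scores (`candidates`, `logits`) are made up for illustration and do not come from the slide; a real model would produce one score per vocabulary entry.

```python
import math
import random

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens (illustrative values).
candidates = ["cat", "dog", "car"]
logits = [2.0, 1.0, 0.1]
probs = softmax(logits)

# Greedy decoding: always take the highest-probability token.
greedy_token = candidates[probs.index(max(probs))]

# Sampling: draw a token in proportion to its probability.
rng = random.Random(0)  # fixed seed so the sketch is repeatable
sampled_token = rng.choices(candidates, weights=probs, k=1)[0]
```

Greedy selection is deterministic, while sampling can return a less likely token, which is one reason the same prompt can yield different outputs.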

The prompt or data is tokenized and prepared for processing.
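The tokenization step can be sketched as follows. This is a toy whitespace tokenizer, not the method any particular model uses (production systems generally rely on subword schemes); `build_vocab`, `tokenize`, and the `<unk>` placeholder are hypothetical names chosen for this example.

```python
def build_vocab(corpus):
    """Assign an integer id to each distinct word; id 0 is reserved for unknown words."""
    vocab = {"<unk>": 0}
    for word in corpus.lower().split():
        vocab.setdefault(word, len(vocab))
    return vocab

def tokenize(text, vocab):
    """Map each word to its id, falling back to the <unk> id for unseen words."""
    return [vocab.get(word, vocab["<unk>"]) for word in text.lower().split()]

vocab = build_vocab("the cat sat on the mat")
token_ids = tokenize("The cat sat on the sofa", vocab)
# token_ids == [1, 2, 3, 4, 1, 0]  ("sofa" is not in the vocabulary)
```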

The model converts tokens into vector embeddings that represent their meaning.
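An embedding lookup amounts to indexing into a table with one row per token id. The sketch below assumes a six-entry vocabulary and four dimensions purely for illustration, and fills the table with random numbers; in a trained model these values are learned, so that related tokens end up with similar vectors.

```python
import math
import random

EMBED_DIM = 4   # illustrative; real models use far larger vectors
VOCAB_SIZE = 6  # assumed toy vocabulary size

rng = random.Random(0)
# One row per token id. Random here; learned during training in a real model.
embedding_table = [
    [rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)]
    for _ in range(VOCAB_SIZE)
]

def embed(token_ids):
    """Look up the vector for each token id."""
    return [embedding_table[i] for i in token_ids]

def cosine_similarity(a, b):
    """Similarity of direction between two vectors, ranging from -1 to 1."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

vectors = embed([1, 2, 3])
similarity = cosine_similarity(vectors[0], vectors[1])
```

Cosine similarity is one common way to compare embeddings; with random vectors the score is meaningless, but with learned vectors it tends to be higher for related tokens.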

Neural layers compute probabilities and generate new tokens step‑by‑step.
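The step-by-step generation loop can be illustrated without a neural network. In the sketch below a table of bigram counts stands in for the neural layers (a deliberate simplification, not what the slide's model does), and each new token is chosen greedily and appended before the next prediction. All function names and the tiny corpus are assumptions made for this example.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    """Turn the follower counts for `prev` into a probability distribution."""
    total = sum(counts[prev].values())
    return {tok: c / total for tok, c in counts[prev].items()}

def generate(counts, start, max_new_tokens):
    """Autoregressive loop: predict, append, then predict again from the new context."""
    out = [start]
    for _ in range(max_new_tokens):
        probs = next_token_probs(counts, out[-1])
        if not probs:  # no known continuation, so stop early
            break
        out.append(max(probs, key=probs.get))  # greedy choice
    return out

corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)
generated = generate(model, "the", 4)
```

A real generative model conditions on the whole preceding context rather than only the last token, but the loop structure is the same: each output token becomes part of the input for the next step.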

Tokens are decoded into text, images, or other content formats.
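For text, decoding is the reverse of tokenization: each id is mapped back to its token and the pieces are joined. The sketch below assumes a small hypothetical id-to-token table (`id_to_token`) and covers only the text case; image and other content formats use different decoders.

```python
# Hypothetical id-to-token table for a toy vocabulary.
id_to_token = {0: "<unk>", 1: "the", 2: "cat", 3: "sat", 4: "on", 5: "mat"}

def decode(token_ids):
    """Map ids back to tokens and join them with spaces."""
    return " ".join(id_to_token.get(i, "<unk>") for i in token_ids)

text = decode([1, 2, 3, 4, 1, 5])
# text == "the cat sat on the mat"
```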

Text applications include chatbots, storytelling, report writing, and summarization.

Visual applications include artwork creation, product design, and marketing mockups.

Coding applications include auto-completing functions, generating boilerplate, and debugging assistance.

Data applications include synthetic datasets for testing, training, and privacy-preserving analytics.

Slide 22 visualizes how a generative model transforms input prompts into outputs, showing token flow and model structure.

The model selects next tokens based on probability distributions learned during training.

The model learns patterns and associations; it does not have human-level understanding.

Continue exploring deeper tutorials and hands-on examples.
