Generative AI Tutorial – Slide 48

A clear explanation of the concept illustrated in Slide 48, including examples, applications, and the technical reasoning behind the idea.

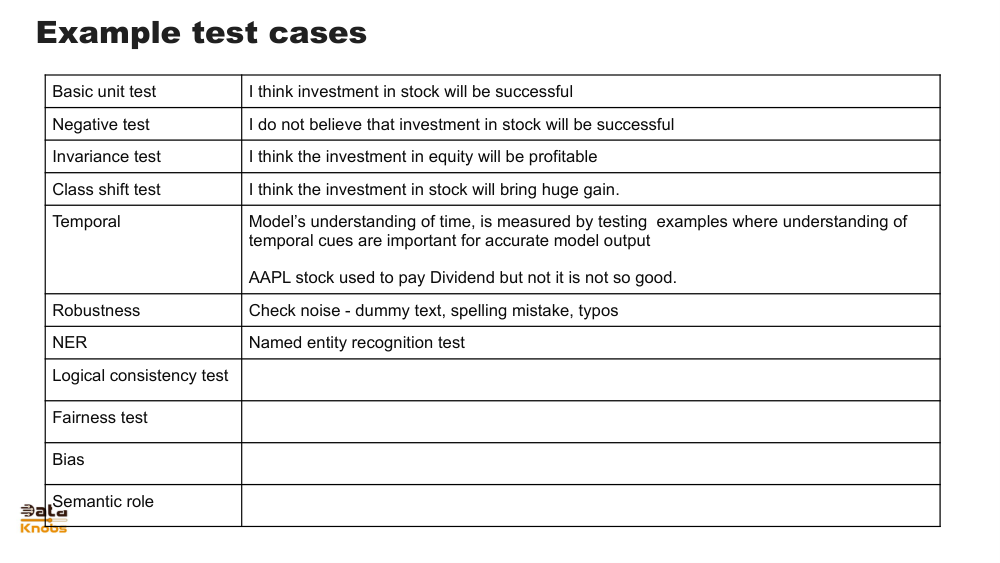

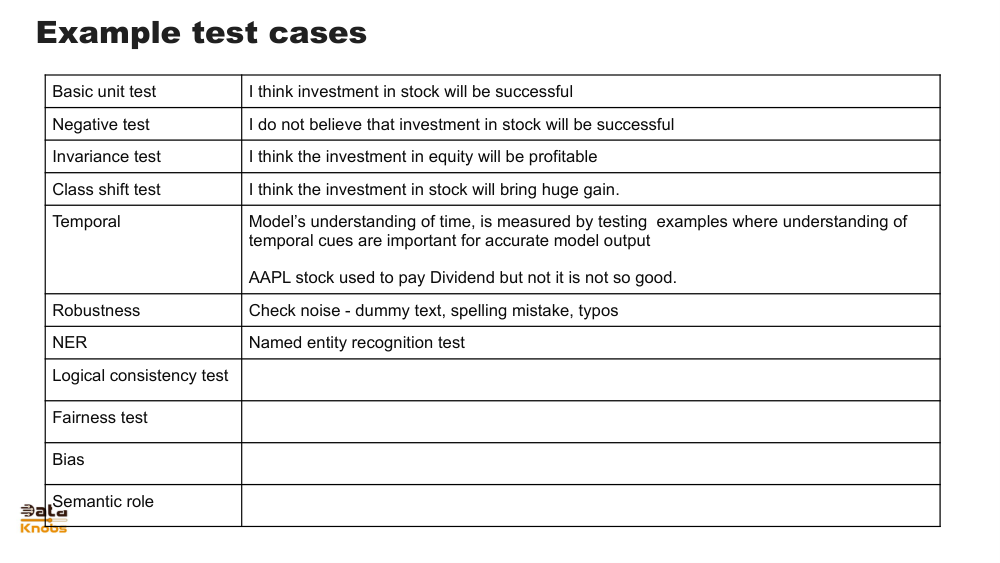

Slide 48 presents the concept of model refinement in Generative AI: improving model outputs through iteration, evaluation, and fine-tuning. Methods include reinforcement learning, prompt engineering, and feedback loops that improve the accuracy and alignment of generated content.

In a feedback loop, structured feedback from users or automated systems shapes the model's future outputs.
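As a minimal sketch of such a loop, assuming feedback arrives as a rating plus an optional corrected answer, the Python below stores corrections and replays them as few-shot examples in later prompts. `FeedbackStore`, `record`, and `build_prompt` are hypothetical names chosen for illustration, and this variant shapes outputs without changing any model weights.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class FeedbackStore:
    """Hypothetical store that keeps user corrections keyed by prompt."""

    corrections: Dict[str, str] = field(default_factory=dict)

    def record(self, prompt: str, rating: int, correction: Optional[str] = None) -> None:
        # Keep only negative feedback that came with a corrected answer;
        # positively rated answers need no change.
        if rating < 0 and correction:
            self.corrections[prompt] = correction

    def build_prompt(self, new_prompt: str, max_examples: int = 3) -> str:
        # Prepend the most recent corrections as few-shot examples so that
        # past feedback shapes the next output.
        examples = list(self.corrections.items())[-max_examples:]
        blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
        blocks.append(f"Q: {new_prompt}\nA:")
        return "\n\n".join(blocks)


store = FeedbackStore()
store.record("Capital of Australia?", rating=-1, correction="Canberra")
store.record("What is 2 + 2?", rating=1)
print(store.build_prompt("Capital of Canada?"))
```

Capping the replayed examples at `max_examples` keeps prompts short; a weight-updating loop would instead turn the same feedback into training data.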

Evaluation metrics for quality, relevance, and safety guide model updates and help keep outputs reliable.
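The sketch below shows how such metrics might gate an update. The three scorers are deliberately crude stand-ins assumed for illustration (word-overlap relevance, a length check for quality, and a keyword blocklist for safety); real evaluations usually rely on stronger checks such as trained classifiers or human review. `evaluate`, `accept`, and `BLOCKLIST` are hypothetical names.

```python
from typing import Dict

# Hypothetical safety blocklist; real systems use far richer checks.
BLOCKLIST = {"password", "ssn"}


def evaluate(prompt: str, output: str) -> Dict[str, float]:
    """Score an output on quality, relevance, and safety (each in [0, 1])."""
    p_words = set(prompt.lower().split())
    o_words = set(output.lower().split())
    union = p_words | o_words
    # Relevance: Jaccard overlap between prompt and output vocabularies.
    relevance = len(p_words & o_words) / len(union) if union else 0.0
    # Quality: a crude length check (between 3 and 200 words).
    quality = 1.0 if 3 <= len(output.split()) <= 200 else 0.0
    # Safety: fail the output if it contains any blocklisted word.
    safety = 0.0 if o_words & BLOCKLIST else 1.0
    return {"quality": quality, "relevance": relevance, "safety": safety}


def accept(scores: Dict[str, float], thresholds: Dict[str, float]) -> bool:
    # An output passes only if every metric clears its threshold.
    return all(scores[name] >= limit for name, limit in thresholds.items())


thresholds = {"quality": 1.0, "relevance": 0.3, "safety": 1.0}
scores = evaluate("what is the capital of france", "the capital of france is paris")
print(scores, accept(scores, thresholds))
```

Requiring every metric to pass, rather than averaging them, prevents a high relevance score from masking a safety failure.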

Through iterative tuning, the model adjusts its responses over multiple cycles to better match expectations.
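One way to picture this at inference time is a generate, critique, and revise loop. In the sketch below the generator and critic are plain callables assumed for illustration (in practice they might be model calls); `refine`, `toy_generate`, `toy_critique`, and `REQUIRED` are hypothetical names, and the toy functions exist only to make the loop runnable.

```python
from typing import Callable, Optional, Tuple

# generate(prompt, previous_output, feedback) -> new output
Generator = Callable[[str, Optional[str], Optional[str]], str]
# critique(output) -> feedback text, or None when the output is acceptable
Critic = Callable[[str], Optional[str]]


def refine(prompt: str, generate: Generator, critique: Critic,
           max_cycles: int = 3) -> Tuple[str, int]:
    """Regenerate until the critic is satisfied or the cycle budget runs out."""
    output = ""
    previous: Optional[str] = None
    feedback: Optional[str] = None
    for cycle in range(1, max_cycles + 1):
        output = generate(prompt, previous, feedback)
        feedback = critique(output)
        if feedback is None:
            return output, cycle
        previous = output
    return output, max_cycles


REQUIRED = ["paris", "capital"]  # hypothetical expectations for the answer


def toy_generate(prompt: str, previous: Optional[str], feedback: Optional[str]) -> str:
    # First draft is terse; later drafts append whatever the critic asked for.
    return "Paris" if previous is None else f"{previous} {feedback}"


def toy_critique(output: str) -> Optional[str]:
    words = output.lower().split()
    missing = [kw for kw in REQUIRED if kw not in words]
    return missing[0] if missing else None


print(refine("What is the capital of France?", toy_generate, toy_critique))
# prints ('Paris capital', 2)
```

The `max_cycles` budget matters: without it, a critic that is never satisfied would loop forever.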

1. Input: The user provides a prompt or training data.

2. Model output: The model generates predictions or content.

3. Evaluation: Quality is assessed through metrics or human review.

4. Refinement: Model weights or prompts are adjusted to improve the next round of outputs.
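The four steps can be sketched as a training loop for a toy one-feature linear model, assuming mean squared error as the evaluation metric and plain gradient descent as the refinement step. The data (points on y = 2x + 1), learning rate, cycle count, and the name `refine_weights` are illustrative choices, not details from the slide.

```python
from typing import List, Tuple


def refine_weights(data: List[Tuple[float, float]], lr: float = 0.05,
                   cycles: int = 1000) -> Tuple[float, float, List[float]]:
    """Fit y = w * x + b by repeating output, evaluation, and refinement."""
    w, b = 0.0, 0.0
    n = len(data)
    history = []
    for _ in range(cycles):
        # 2. Model output: predictions from the current weights.
        preds = [w * x + b for x, _ in data]
        # 3. Evaluation: mean squared error against the targets.
        errors = [p - y for p, (_, y) in zip(preds, data)]
        history.append(sum(e * e for e in errors) / n)
        # 4. Refinement: move the weights against the MSE gradient.
        grad_w = 2 * sum(e * x for e, (x, _) in zip(errors, data)) / n
        grad_b = 2 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b, history


# 1. Input: training data drawn from y = 2x + 1.
data = [(float(x), 2.0 * x + 1.0) for x in range(5)]
w, b, history = refine_weights(data)
print(round(w, 3), round(b, 3))  # prints 2.0 1.0
```

The recorded `history` makes the evaluation step visible: the error should shrink cycle after cycle as refinement pulls the weights toward the targets.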

Chatbots refine answers based on user satisfaction and corrective input.

Models reduce artifacts or inaccuracies through iterative updates.

Code assistants refine their suggestions through error checking and regression tests.
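A minimal sketch of the code-assistant case, assuming candidate suggestions arrive as Python source strings: each candidate is compiled and executed (error checking), then run against fixed input/output pairs (regression tests), and only passing candidates are kept. `passes_regression` and `select_suggestions` are hypothetical helpers, and running `exec` on model-generated code is only appropriate inside a sandbox.

```python
from typing import Any, Dict, List, Sequence, Tuple

Case = Tuple[Tuple[Any, ...], Any]  # (arguments, expected result)


def passes_regression(source: str, func_name: str, cases: Sequence[Case]) -> bool:
    """True if the source defines func_name and it matches every test case."""
    namespace: Dict[str, Any] = {}
    try:
        exec(source, namespace)  # error checking: syntax or definition-time errors
        fn = namespace[func_name]
        return all(fn(*args) == expected for args, expected in cases)
    except Exception:
        # Any failure (syntax error, missing function, crash) rejects the candidate.
        return False


def select_suggestions(candidates: List[str], func_name: str,
                       cases: Sequence[Case]) -> List[str]:
    return [src for src in candidates if passes_regression(src, func_name, cases)]


candidates = [
    "def add(a, b):\n    return a - b\n",  # wrong logic
    "def add(a, b)\n    return a + b\n",   # syntax error
    "def add(a, b):\n    return a + b\n",  # correct
]
cases = [((1, 2), 3), ((0, 0), 0), ((-1, 1), 0)]
print(select_suggestions(candidates, "add", cases))
```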

Refinement helps keep models accurate, safe, and aligned with user goals.

Refinement does not always require full retraining. Some refinements happen through prompt tuning or reinforcement learning applied to an existing model.
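To illustrate refinement without retraining, the sketch below keeps a stand-in model fixed and only chooses between prompt templates by their exact-match accuracy on a small evaluation set. This is discrete prompt selection, closer to prompt engineering than to learned soft-prompt tuning; `frozen_model`, `best_template`, the templates, and the evaluation data are all hypothetical.

```python
from typing import Callable, List, Tuple


def frozen_model(prompt: str) -> str:
    """Hypothetical stand-in for a fixed model; its behavior never changes."""
    question = prompt.rsplit("Question:", 1)[-1].strip()
    answer = {"2 + 2?": "4", "3 * 3?": "9"}.get(question, "unknown")
    # Terse only when the prompt explicitly asks for a one-word reply.
    if "one word" in prompt.lower():
        return answer
    return f"Sure! The answer is {answer}."


def best_template(templates: List[str], eval_set: List[Tuple[str, str]],
                  model: Callable[[str], str]) -> Tuple[float, str]:
    """Return (accuracy, template) for the most accurate template."""
    def accuracy(template: str) -> float:
        hits = sum(model(template.format(q=q)) == a for q, a in eval_set)
        return hits / len(eval_set)

    return max(((accuracy(t), t) for t in templates), key=lambda pair: pair[0])


templates = ["Question: {q}", "Reply in one word. Question: {q}"]
eval_set = [("2 + 2?", "4"), ("3 * 3?", "9")]
print(best_template(templates, eval_set, frozen_model))
# prints (1.0, 'Reply in one word. Question: {q}')
```

Only the prompt changes between candidates, so the improvement comes entirely from how the fixed model is asked.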

Refinement is highly valuable and often leads to more aligned and useful model outputs.
