Generative AI – Slide 45 Explained

A clear breakdown of the concept illustrated in Slide 45, including examples, applications, and the underlying technical reasoning.

Slide 45 focuses on how generative AI transforms an input prompt into an output through iterative prediction and refinement. The concept highlights model behavior, token-level generation, and the flow of information from input encoding to final decoded output.

The model predicts the next token based on previous tokens, building the output sequentially.

Input text is converted into high‑dimensional vectors, enabling the model to interpret meaning and context.

The model uses attention mechanisms to reference relevant past tokens for coherent and context-aware generation.
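To make the attention idea concrete, here is a minimal sketch of causal scaled dot-product attention in plain Python. It is an illustration under simplifying assumptions, not the slide's own implementation: the function names and the toy 2-dimensional vectors are hypothetical, and the raw token vectors stand in for queries, keys, and values, whereas real models first pass them through learned projections.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(queries, keys, values):
    """Scaled dot-product attention where position i only sees positions <= i."""
    d = len(keys[0])
    outputs = []
    for i, q in enumerate(queries):
        # Score the current token against itself and every earlier token.
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # Blend the visible value vectors using the attention weights.
        out = [
            sum(w * values[j][k] for j, w in enumerate(weights))
            for k in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs

# Three hypothetical 2-dimensional token vectors (assumed for illustration).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = causal_attention(x, x, x)
```

The first position can only attend to itself, so its output equals its own value vector. Later positions produce a weighted mix of everything seen so far, which is how earlier tokens inform each new prediction.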

The input prompt is tokenized and converted into embeddings representing meaning and context.

The model processes the embeddings through transformer layers, applying attention to understand semantic relationships.

The decoder assigns a probability to each candidate next token, selects one token, and appends it to the output sequence.

The model repeats the prediction loop until the generated output is complete or a stop token is reached.
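The steps above can be sketched as a short loop. This is a toy illustration under stated assumptions: `NEXT_TOKEN_PROBS`, `predict_next`, and `generate` are hypothetical names, and the lookup table stands in for a real transformer, which would condition on the whole sequence rather than only the last token. Selection here is greedy (always the most probable token) to keep the example deterministic.

```python
# Hypothetical toy "model": next-token probabilities given only the last token.
# A real model would compute these from embeddings and transformer layers.
NEXT_TOKEN_PROBS = {
    "<start>": {"the": 0.9, "a": 0.1},
    "the": {"cat": 0.6, "dog": 0.4},
    "a": {"cat": 0.5, "dog": 0.5},
    "cat": {"sleeps": 0.7, "<stop>": 0.3},
    "dog": {"sleeps": 0.6, "<stop>": 0.4},
    "sleeps": {"<stop>": 1.0},
}

def predict_next(tokens):
    """Return a {token: probability} distribution for the next token."""
    return NEXT_TOKEN_PROBS[tokens[-1]]

def generate(prompt_tokens, max_new_tokens=10):
    """Repeat predict -> select -> append until a stop token or the length limit."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        probs = predict_next(tokens)
        next_token = max(probs, key=probs.get)  # greedy selection
        if next_token == "<stop>":
            break  # the stop token ends generation and is not emitted
        tokens.append(next_token)
    return tokens

result = generate(["<start>"])
print(result)  # ['<start>', 'the', 'cat', 'sleeps']
```

The `max_new_tokens` limit covers the case where no stop token is ever produced, so the loop always terminates.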

Text generation covers blog posts, emails, scripts, and creative writing.

Code generation covers writing functions automatically, fixing bugs, and generating documentation.

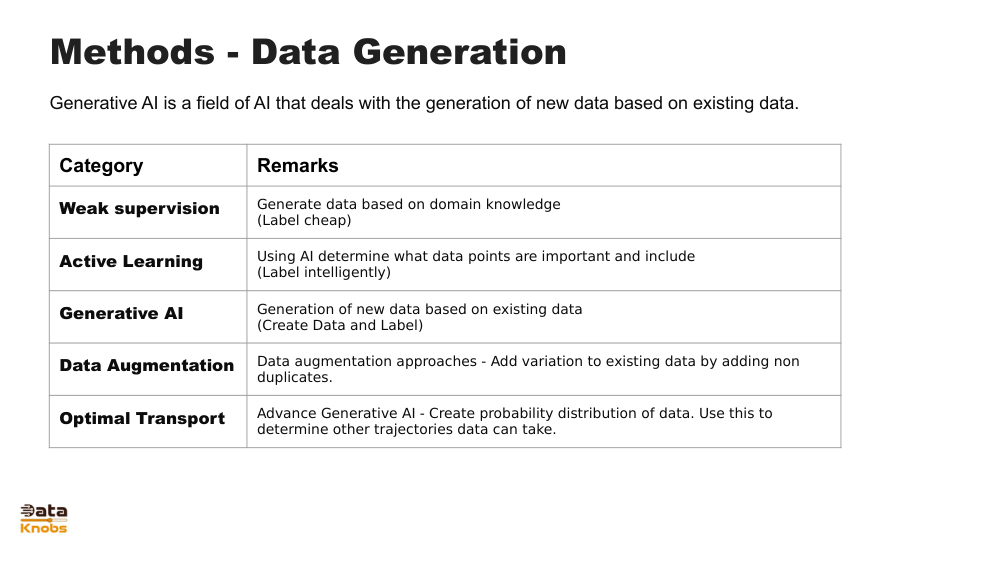

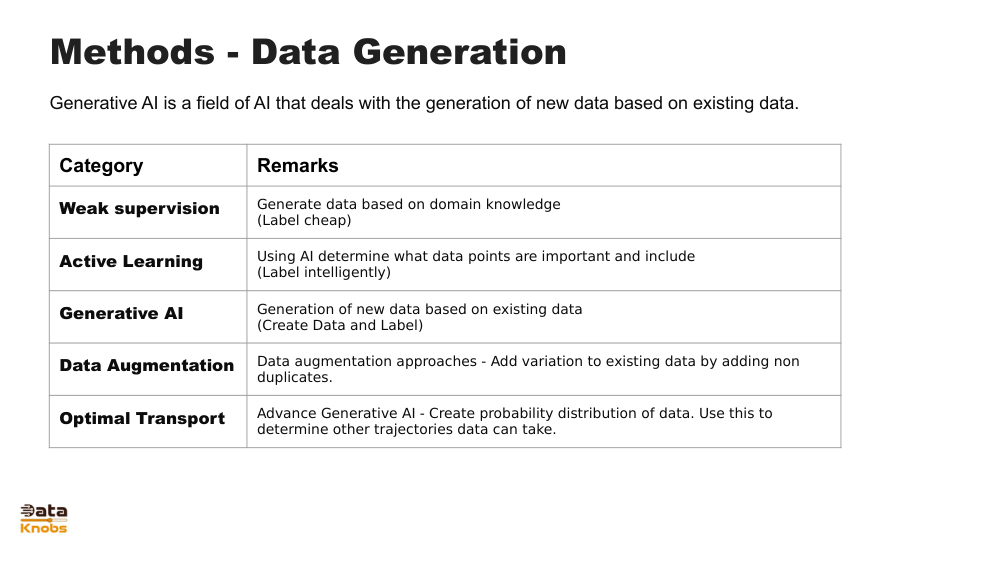

Data generation covers creating sample datasets, synthetic images, or training data.

Generating one token at a time allows the model to condition each step on previously generated content, improving coherence.

Temperature, top‑k, and top‑p sampling influence randomness and creativity.
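One way these three settings can interact is sketched below. The function name `sample_next_token`, its parameters, and the example logits are assumptions made for illustration; production libraries differ in details such as the order of filtering and how ties are handled. Temperature rescales the scores before they become probabilities, top-k keeps only the k most likely tokens, and top-p keeps the smallest set of top tokens whose cumulative probability reaches p.

```python
import math
import random

def sample_next_token(logits, temperature=1.0, top_k=None, top_p=None, rng=random):
    """Sample one token from a {token: logit} dict.

    temperature must be positive; top_k, if given, should be at least 1.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")

    # Temperature: values below 1 sharpen the distribution, above 1 flatten it.
    scaled = {tok: logit / temperature for tok, logit in logits.items()}
    m = max(scaled.values())
    exps = {tok: math.exp(v - m) for tok, v in scaled.items()}
    total = sum(exps.values())

    # Rank tokens from most to least probable.
    ranked = sorted(
        ((tok, e / total) for tok, e in exps.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )

    # Top-k: keep only the k most probable tokens.
    if top_k is not None:
        ranked = ranked[:top_k]

    # Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    if top_p is not None:
        kept, cumulative = [], 0.0
        for tok, p in ranked:
            kept.append((tok, p))
            cumulative += p
            if cumulative >= top_p:
                break
        ranked = kept

    tokens = [tok for tok, _ in ranked]
    weights = [p for _, p in ranked]
    # random.choices accepts unnormalized weights, so no renormalization is needed.
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for three candidate tokens.
example_logits = {"cat": 2.0, "dog": 1.0, "fish": 0.1}
choice = sample_next_token(example_logits, temperature=0.8, top_k=2)
```

With `top_k=1` the function reduces to greedy selection, while a higher temperature combined with a larger `top_k` or `top_p` lets less likely tokens through and makes the output more varied.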

The model identifies patterns and relationships in data; these can simulate understanding but are statistical in nature.
