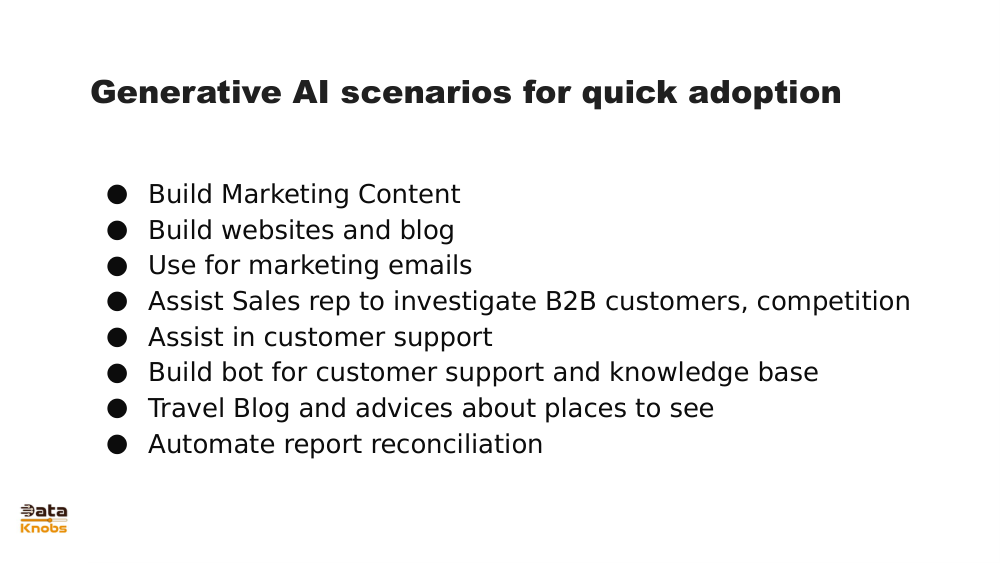

Generative AI – Slide 56 Concept Explained

A clear breakdown of the technical idea shown in the slide, with examples, applications, and how it works.

Slide 56 illustrates how generative AI systems use trained models to transform an input signal into a predicted or generated output. It highlights the relationship between a model’s learned representation and the produced result.

Raw data (text, image, audio) is converted into numerical vectors that the model can interpret.

Slide 56 emphasizes how internal layers learn patterns and relationships, enabling prediction.

The model produces new content that aligns with the input and its learned patterns.

The user provides an input, such as text or an image.

The model transforms the input into embeddings that represent meaning or features.

The network processes embeddings through layers that refine predictions.

The output is generated: text, image, audio, or another modality.
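The four steps above can be sketched as a toy next-token generator. This is only an illustration under stated assumptions: the vocabulary, the embedding size, the random weights, and the helper names (`next_token_probs`, `generate`) are hypothetical and do not come from the slide. A real model learns its weights from data instead of drawing them at random.

```python
import math
import random

# Hypothetical toy vocabulary and sizes, chosen only for this sketch.
VOCAB = ["the", "model", "reads", "input", "and", "writes", "output"]
DIM = 4

rng = random.Random(0)
# Step 2: one embedding vector per token (random here, learned in a real model).
EMBED = {tok: [rng.uniform(-1, 1) for _ in range(DIM)] for tok in VOCAB}
# Step 3: one hidden layer and one output projection onto the vocabulary.
W_HIDDEN = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in range(DIM)]
W_OUT = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in VOCAB]


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_probs(tokens):
    """Embed the tokens, pass them through the layers, score every vocab entry."""
    vectors = [EMBED[t] for t in tokens]
    # Average the embeddings into a single context vector.
    context = [sum(col) / len(vectors) for col in zip(*vectors)]
    hidden = [math.tanh(h) for h in matvec(W_HIDDEN, context)]
    return softmax(matvec(W_OUT, hidden))


def generate(prompt, steps=3):
    """Step 1: take user input. Step 4: append the most likely token each round."""
    tokens = prompt.split()
    for _ in range(steps):
        probs = next_token_probs(tokens)
        best = max(range(len(VOCAB)), key=probs.__getitem__)
        tokens.append(VOCAB[best])
    return tokens


print(generate("the model reads"))
```

Because the weights are random, the generated tokens carry no meaning; the point is only the flow from input to embeddings to layers to output.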

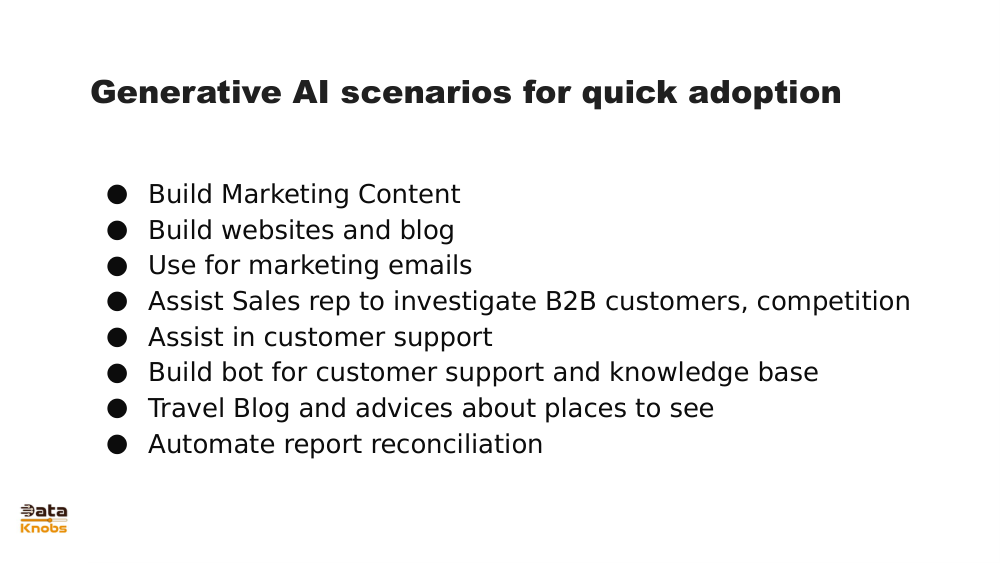

Text generation: articles, code, advertising copy, character dialogue.

Image generation: artwork, product renderings, visual concepts.

Assistants: question answering, summarization, tutoring.

Discriminative models predict labels or categories; they focus on classification.

Generative models create new content; they predict likely next tokens or pixel patterns.
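The contrast between predicting a label and creating new content can be made concrete with a small sketch. Everything here is an assumption for illustration: the corpus, the label names, and the functions `classify`, `train_bigrams`, and `generate` are hypothetical. The hand-written rule in `classify` stands in for a trained classifier, and the bigram table is a deliberately simple stand-in for a generative model.

```python
import random
from collections import Counter, defaultdict

# Hypothetical toy corpus used only for this sketch.
CORPUS = "the cat sat on the mat the cat ate the fish".split()


def classify(sentence):
    """Label prediction: map an input to one category from a fixed set.

    A hand-written rule stands in for a trained classifier here.
    """
    animal_words = {"cat", "fish"}
    return "animal" if set(sentence.split()) & animal_words else "other"


def train_bigrams(tokens):
    """Count which token follows which, as a tiny next-token model."""
    table = defaultdict(Counter)
    for cur, nxt in zip(tokens, tokens[1:]):
        table[cur][nxt] += 1
    return table


def generate(table, start, length, seed=0):
    """Content generation: sample likely next tokens to build a new sequence."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        options = table.get(out[-1])
        if not options:
            break  # no known continuation for this token
        words, counts = zip(*options.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return out


table = train_bigrams(CORPUS)
print(classify("the cat sat"))      # a single label
print(generate(table, "the", 5))    # a new token sequence
```

The classifier always returns one of two fixed labels, while the generator can return sequences that never appear verbatim in the corpus.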

Slide 56 shows how generative AI maps inputs to structured outputs through learned internal representations.

Yes. Although the architectures differ, both rely on embedding the input into numerical representations.

