Generative AI – Slide 35 Explained

A clear breakdown of the concept illustrated in Slide 35 with real-world applications and technical insights.


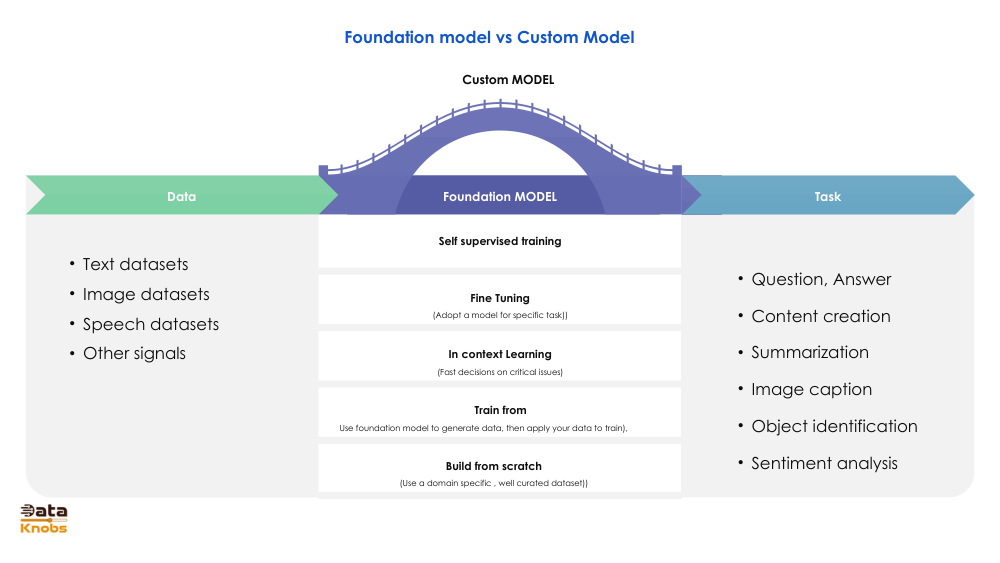

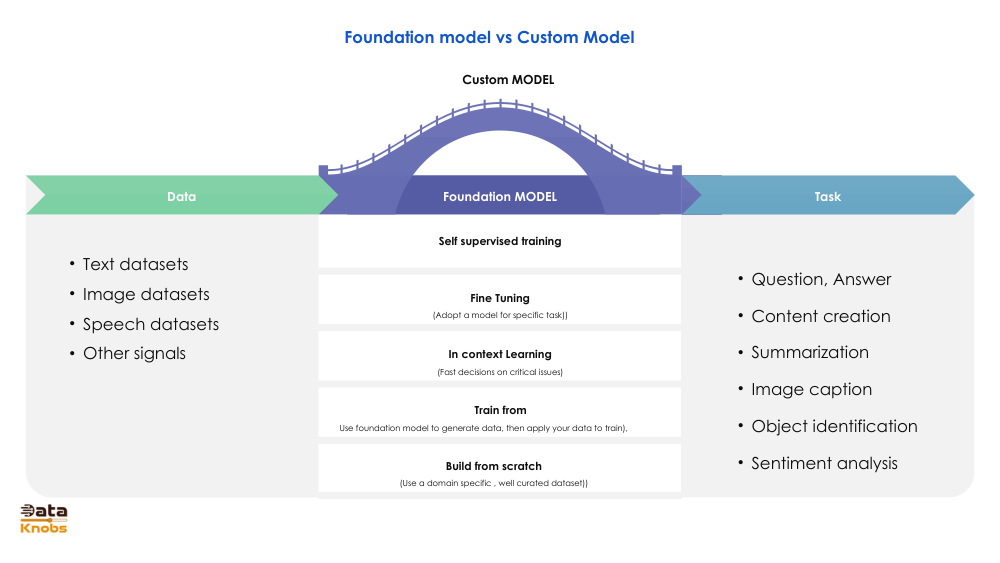

Slide 35 illustrates how Generative AI models transform raw data into meaningful outputs through statistical learning and pattern recognition. It highlights the core workflow: training on vast datasets, learning internal representations, and generating new content that resembles the training distribution.

Representation learning: models learn abstract representations (features) from data instead of relying on manually programmed rules.

Latent space: a mathematical space in which complex data such as text or images is encoded as structured vectors.

Generative sampling: models sample from their learned distributions to create new text, images, audio, and more.
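The idea of "learning a distribution, then sampling from it" can be shown at toy scale. The sketch below is a hedged illustration, not how real generative models work: it assumes the data follow a single normal distribution, "learns" that distribution by estimating its mean and standard deviation, and then samples new values. The names `fit_gaussian` and `sample` and the training values are hypothetical; real models learn far richer distributions with neural networks.

```python
import random
import statistics


def fit_gaussian(data):
    """'Training': estimate the mean and standard deviation of 1-D data."""
    return statistics.fmean(data), statistics.stdev(data)


def sample(mu, sigma, n, seed=None):
    """'Generation': draw n new values from the fitted normal distribution."""
    rng = random.Random(seed)
    return [rng.gauss(mu, sigma) for _ in range(n)]


# Hypothetical training data (e.g. sensor readings).
training_data = [4.8, 5.1, 5.0, 4.9, 5.3, 5.2, 4.7, 5.0]
mu, sigma = fit_gaussian(training_data)
print(f"learned mu={mu:.2f}, sigma={sigma:.2f}")
print([round(x, 2) for x in sample(mu, sigma, n=5, seed=0)])
```

The sampled values cluster around the training mean without being copies of any particular training value, which is the basic behavior described above.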

1. Data input: text, images, audio, or mixed datasets.
2. Training: neural networks learn patterns and representations from the data.
3. Latent encoding: content is mapped into a compressed vector space.
4. Generation: new data is created by sampling from the learned distributions.
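The data-to-generation flow can be mirrored by a deliberately tiny text generator. This sketch is only an analogy under stated assumptions: it substitutes a character bigram model (a classic counting technique, not a neural network) for the training stage, and it has no separate latent-encoding stage. The corpus, the function names `train_bigram` and `generate`, and the `^`/`$` boundary markers are all hypothetical.

```python
import random
from collections import Counter, defaultdict

START, END = "^", "$"  # hypothetical word-boundary markers


def train_bigram(words):
    """Training: count which character follows which (a stand-in for learning patterns)."""
    counts = defaultdict(Counter)
    for word in words:
        chars = [START] + list(word) + [END]
        for prev, nxt in zip(chars, chars[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, rng, max_len=12):
    """Generation: sample one character at a time from the learned transition counts."""
    out, prev = [], START
    while len(out) < max_len:
        options = counts[prev]
        nxt = rng.choices(list(options), weights=list(options.values()))[0]
        if nxt == END:
            break
        out.append(nxt)
        prev = nxt
    return "".join(out)


# Data input: a tiny hypothetical corpus.
corpus = ["data", "model", "latent", "sample", "learn", "neural"]
model = train_bigram(corpus)
rng = random.Random(42)
print([generate(model, rng) for _ in range(5)])
```

Some outputs reproduce corpus words and others recombine fragments of them; the `max_len` cap simply guarantees that generation terminates.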

- Text: chatbots, summaries, rewriting, and content creation.
- Images: concept art, product imagery, and visuals on demand.
- Synthetic data: generated samples that augment the training data of other models.
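Synthetic-data augmentation does not always need a large generative model. As a hedged, simpler stand-in, the sketch below follows the core idea of SMOTE (Synthetic Minority Over-sampling Technique), simplified to a single nearest neighbor: it creates new points on the line segment between a randomly chosen sample and its closest neighbor. The function name `smote_like` and the feature vectors are hypothetical, and this is an interpolation heuristic rather than a learned distribution.

```python
import math
import random


def smote_like(samples, n_new, seed=None):
    """Create synthetic samples by interpolating between a random sample and its
    nearest neighbor (the core idea of SMOTE, simplified to k = 1)."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to interpolate")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(samples))
        base = samples[i]
        others = samples[:i] + samples[i + 1:]
        neighbor = min(others, key=lambda q: math.dist(base, q))
        t = rng.random()  # position along the segment from base to neighbor, in [0, 1)
        synthetic.append(tuple(b + t * (nb - b) for b, nb in zip(base, neighbor)))
    return synthetic


# Hypothetical 2-D feature vectors for an under-represented class.
minority = [(1.0, 2.0), (1.5, 1.8), (1.2, 2.4), (0.9, 2.1)]
for point in smote_like(minority, n_new=3, seed=1):
    print(tuple(round(v, 3) for v in point))
```

Because every synthetic point lies between two real samples, the augmented set stays within the region the original data already covers.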

A well-structured latent space enables smooth manipulation of concepts and high-quality generation, because nearby points tend to decode to similar outputs.
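Smooth manipulation in a latent space is often illustrated by interpolating between two latent vectors and decoding each intermediate point. The sketch below shows only the interpolation step, using plain linear interpolation; the latent codes are hypothetical 3-D vectors, and the decoder that would turn each vector into an image or text is assumed and not shown. Some practitioners prefer spherical interpolation for Gaussian latent spaces; linear interpolation is used here for simplicity.

```python
def lerp(z_a, z_b, t):
    """Linearly interpolate between two latent vectors: t=0 gives z_a, t=1 gives z_b."""
    return [a + t * (b - a) for a, b in zip(z_a, z_b)]


def interpolation_path(z_a, z_b, steps):
    """Return `steps` evenly spaced latent vectors from z_a to z_b, inclusive."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [lerp(z_a, z_b, i / (steps - 1)) for i in range(steps)]


# Hypothetical 3-D latent codes; real models use many more dimensions.
z_start = [0.2, -1.0, 0.5]
z_end = [0.8, 0.0, -0.5]
for z in interpolation_path(z_start, z_end, steps=5):
    # In a real system each z would be passed to the model's decoder (not shown).
    print([round(v, 2) for v in z])
```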

Well-trained models aim to learn generalized patterns rather than memorize specific training examples, although some memorization of training data can still occur.
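One rough way to probe the generalization-versus-memorization question is to measure how often generated outputs reproduce training items verbatim. The helper below is a hypothetical sketch (`memorization_rate` and the example data are illustrative); exact matching misses near-duplicates, so more thorough checks typically also compare outputs with a similarity measure.

```python
def memorization_rate(generated, training):
    """Fraction of generated items that are exact copies of a training item."""
    if not generated:
        return 0.0
    training_set = set(training)  # set gives fast membership checks
    copies = sum(1 for item in generated if item in training_set)
    return copies / len(generated)


# Hypothetical training words and model outputs.
training_words = ["data", "model", "latent"]
outputs = ["model", "datent", "lata", "model"]
print(memorization_rate(outputs, training_words))  # 0.5: two of four outputs copy "model"
```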

New content arises from the model's ability to recombine learned patterns into novel, coherent outputs.
