Generative AI – Slide 29 Explained

A clear explanation of the concept shown on Slide 29 with examples, applications, and technical insights.


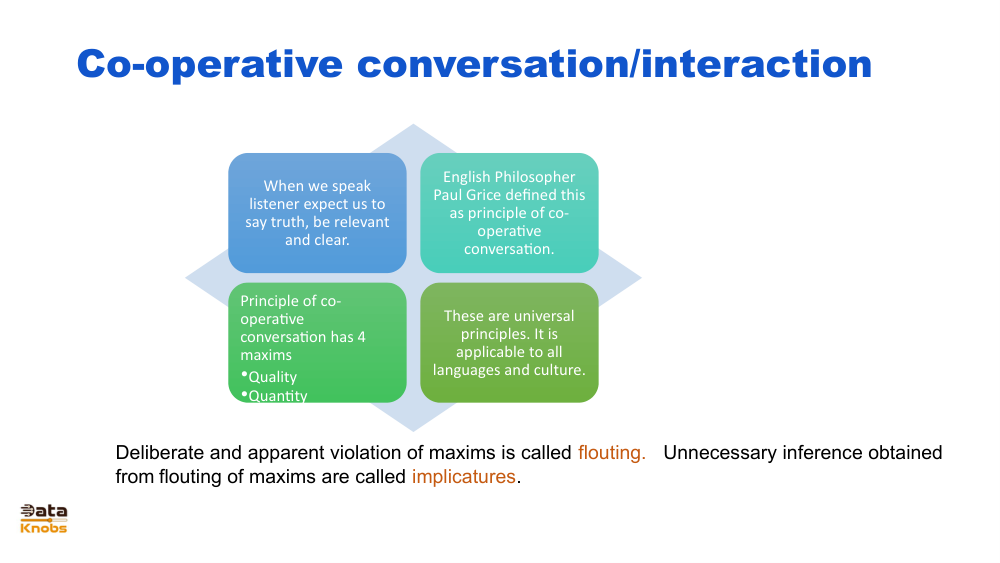

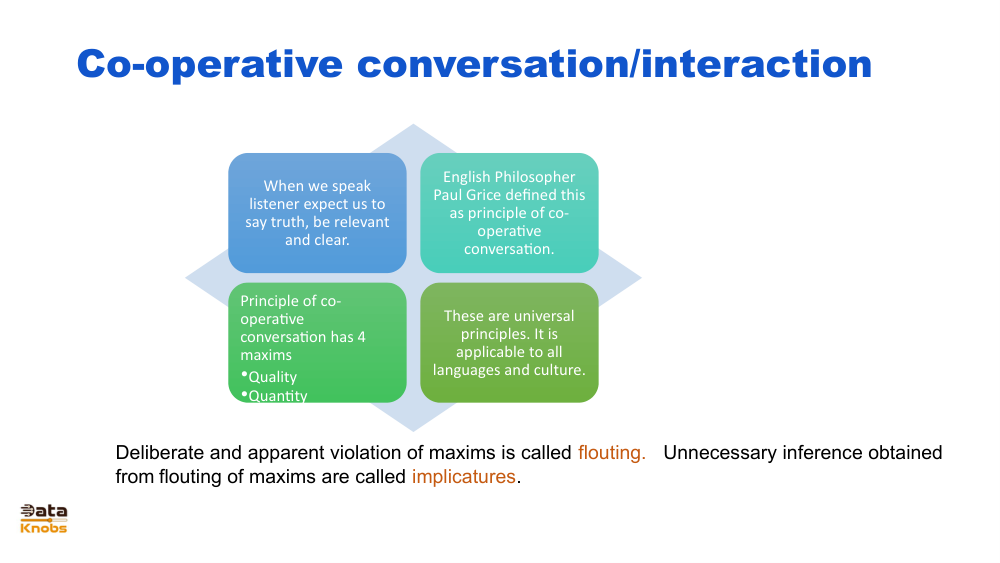

Slide 29 illustrates how generative models transform input data into new, contextually relevant outputs. It highlights three concepts: latent space, model inference, and the way prompts or conditions guide generation.

The model receives instructions, examples, or data that shape its output.

Data is encoded into a compressed representation where relationships and patterns are captured.

The model decodes latent information into text, images, audio, or other generated forms.

The input is a user prompt or sample data.

The input is translated into latent vectors.

The model creates new content based on learned patterns.

The output is the final text, image, or design.
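The four stages above can be sketched in a few lines of Python. This is a toy illustration only, not how a real generative model is built: the two-dimensional `EMBEDDINGS` table, the function names, and the noise level are all hypothetical stand-ins for what a trained network would learn.

```python
import random

# Hypothetical toy "latent space": each known word has a hand-picked 2-D vector.
# A real model would learn these values during training.
EMBEDDINGS = {
    "cat": (0.9, 0.1),
    "dog": (0.8, 0.2),
    "car": (0.1, 0.9),
    "bus": (0.2, 0.8),
}


def encode(prompt):
    """Input -> latent: average the vectors of the known words in the prompt."""
    vectors = [EMBEDDINGS[w] for w in prompt.lower().split() if w in EMBEDDINGS]
    if not vectors:
        raise ValueError("prompt contains no known words")
    n = len(vectors)
    return (sum(v[0] for v in vectors) / n, sum(v[1] for v in vectors) / n)


def generate(latent, rng, noise=0.02):
    """Generation: nudge the latent point slightly so outputs can vary."""
    return tuple(x + rng.uniform(-noise, noise) for x in latent)


def decode(latent):
    """Latent -> output: return the word whose vector is closest to the point."""
    def squared_distance(word):
        vx, vy = EMBEDDINGS[word]
        return (vx - latent[0]) ** 2 + (vy - latent[1]) ** 2
    return min(EMBEDDINGS, key=squared_distance)


def pipeline(prompt, seed=0):
    """Run input -> encoding -> generation -> output end to end."""
    rng = random.Random(seed)
    return decode(generate(encode(prompt), rng))


print(pipeline("the cat"))
```

Words that sit close together in the table ("cat" and "dog") decode to similar outputs, which is the intuition behind a latent space capturing relationships between inputs.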

Generate blog posts, marketing content, lesson plans, or scripts.

Produce illustrations, product mockups, concept art, and design variations.

Generate synthetic data to improve model training.

Model scenarios, generate prototypes, and simulate outcomes.
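As one illustration of the synthetic-data use case listed above, the sketch below fits a simple Gaussian to a handful of measurements and samples new values from it. The data in `REAL_MEASUREMENTS` is made up for the example, and a single Gaussian is an assumption chosen for brevity; real synthetic-data pipelines generally use far richer generative models.

```python
import random
import statistics


def fit_gaussian(samples):
    """Estimate the mean and sample standard deviation of the data."""
    return statistics.mean(samples), statistics.stdev(samples)


def synthesize(samples, n, seed=0):
    """Draw n new values from a Gaussian fitted to the original samples."""
    mu, sigma = fit_gaussian(samples)
    rng = random.Random(seed)
    return [rng.gauss(mu, sigma) for _ in range(n)]


# Hypothetical measurements, invented for this example.
REAL_MEASUREMENTS = [4.8, 5.1, 5.0, 4.9, 5.2]

synthetic = synthesize(REAL_MEASUREMENTS, n=1000)
print(f"real mean: {statistics.mean(REAL_MEASUREMENTS):.2f}")
print(f"synthetic mean: {statistics.mean(synthetic):.2f}")
```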

Slide 29 represents how neural networks perform generative tasks using a pipeline of encoding, transformation, and decoding. Large language models (LLMs) and diffusion models rely on very large parameter counts, often in the billions, trained on massive datasets to learn statistical relationships, which they then apply during inference.
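To make "learning statistical relationships" and "inference" concrete, here is a minimal sketch that swaps the neural network for a count-based bigram model. Training records which word follows which in a tiny made-up corpus, and inference samples the next word in proportion to those counts. The corpus and function names are assumptions for illustration; an LLM learns far richer relationships, but the train-then-sample shape is similar.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up corpus standing in for a massive training dataset.
CORPUS = [
    "the model reads the prompt",
    "the model writes the output",
]


def train_bigram(corpus):
    """Training: count how often each word follows another."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def generate_text(counts, start, max_words=8, seed=0):
    """Inference: repeatedly sample the next word, weighted by its count."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(max_words - 1):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation for this word
        choices, weights = zip(*followers.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


counts = train_bigram(CORPUS)
print(generate_text(counts, "the"))
```

Changing `seed` changes which continuation is sampled, which is a small-scale version of why the same prompt can yield different outputs.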

Slide 29 shows how input is transformed by generative processes into new outputs.

The latent space encodes compressed semantic meaning, which makes generation more efficient.

Prompts guide the direction, constraints, and desired style of the generated content.
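One way to keep direction, constraints, and style explicit is to assemble the prompt from separate parts. The helper below is a hypothetical sketch: `build_prompt` and its plain-text layout are assumptions for illustration, not a format required by any particular model or API.

```python
def build_prompt(task, constraints=(), style=None):
    """Assemble a plain-text prompt from a task, optional constraints, and a style."""
    lines = [f"Task: {task}"]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in constraints)
    if style:
        lines.append(f"Style: {style}")
    return "\n".join(lines)


prompt = build_prompt(
    "Write a lesson plan on photosynthesis",
    constraints=["under 200 words", "suitable for grade 6"],
    style="friendly and encouraging",
)
print(prompt)
```

Keeping the parts separate makes it easier to vary one of them, such as the style, while holding the task and constraints fixed.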

